This New Tool Makes AI’s Role in Student Writing Visible

Apr 15, 2026

How DraftMarks works

Generative artificial intelligence (AI) has transformed college writing. As paper drafts are increasingly co‑written with AI, professors are left wondering not whether students are using AI, but how.

A 2025 AI in Education trend report found that 90% of college students use AI in their coursework, with nearly half using it during the drafting process. As AI becomes embedded in everyday writing, traditional tools for evaluating student learning, such as Grammarly or Turnitin, fall short. If AI is to be expected in most student writing, then merely detecting its presence isn’t enough.

DraftMarks, a new open-source tool developed by Georgia Tech and Stanford researchers, makes the writing process itself visible. Instead of trying to assess how much of a finished document was written by AI, DraftMarks shows where a student iterated with AI prompts, which passages are fully AI-generated, and how a piece evolved, illuminating the often-invisible collaboration between human writers and AI.

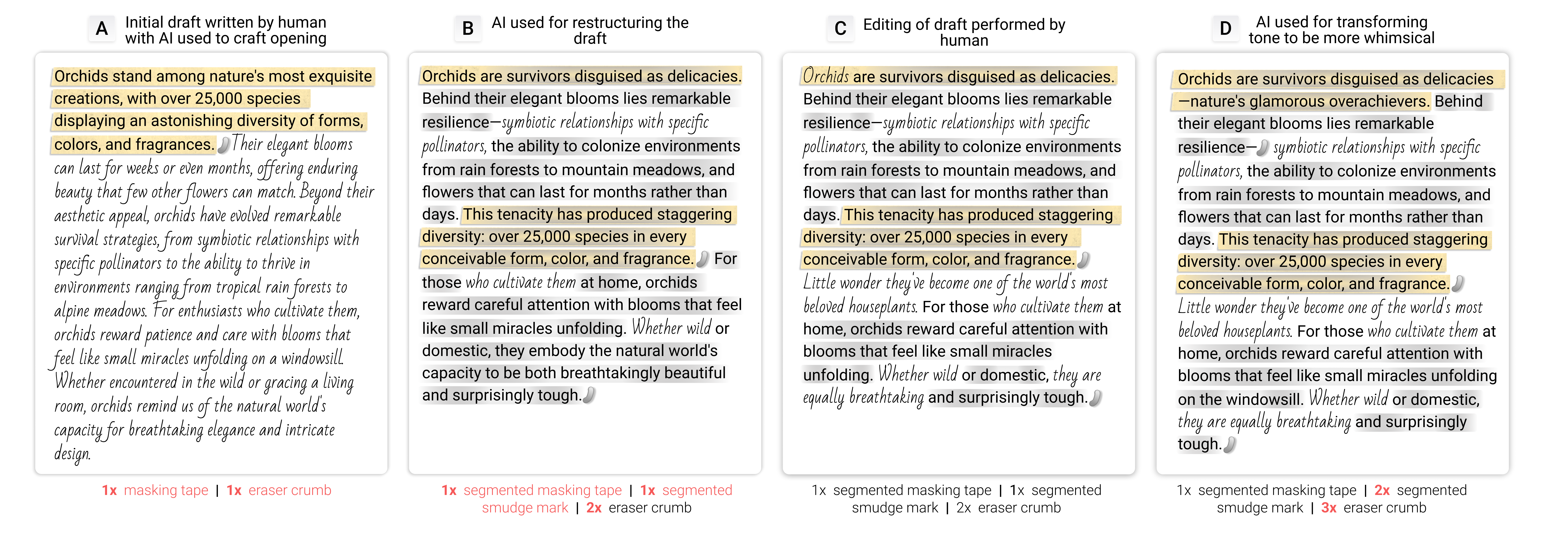

Functioning as an augmented reading tool, DraftMarks layers visual cues directly onto a document to indicate different kinds of AI involvement. Eraser crumbs mark heavily revised passages. Smudges signal where AI changed the strength of an argument rather than its content. Masking tape highlights passages initially generated by AI. Glue residue shows where AI-generated text was later removed. Ghost text indicates when a writer prompted AI but chose not to use the output. Different fonts distinguish between human-written and AI-generated passages.

Together, the marks don’t just reveal AI’s presence. They tell a story about the writer’s process.

“By making the invisible parts of the process tangible, it forces writers to confront whether they are truly engaging with AI or just passively accepting it,” said Momin Siddiqui, a master’s student in the College of Computing and lead author on the project. “Ultimately, it helps writers make more intentional judgment calls about how they want to collaborate with AI in the future.”

The researchers debuted DraftMarks at the Association for Computing Machinery’s Conference on Human Factors in Computing Systems in Barcelona in April.

Designing for Educators

Rather than starting with detection algorithms, the researchers began with educators. In an initial 21-person study, they observed how instructors reviewed student writing and what cues they looked for when assessing learning, revision, and originality. Those insights informed the design of DraftMarks’ visual language, which deliberately mimics physical artifacts of writing — eraser debris, tape, smudges — to reflect processes instructors already recognize.

“These marks are meant to emulate the writing process in ways we’re already familiar with,” said Adam Coscia, a computing Ph.D. student. “They help students and teachers see the effort behind the writing, and whether students actually met the learning objective.”

Behind the scenes, DraftMarks tracks a document’s draft history and classifies different types of edits and AI interactions as they happen, allowing the visual cues to appear almost in real time.
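
To make that idea concrete, here is a minimal Python sketch of how a draft-history tracker might assign marks as edits arrive. It is an illustration only, not DraftMarks’ actual implementation: the `DraftEvent` fields, the action names, the `DraftTracker` class, and the `REVISION_THRESHOLD` cutoff are all hypothetical, and only the mark names and their meanings come from the descriptions in this article.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Mark(Enum):
    ERASER_CRUMBS = "eraser crumbs"  # heavily revised passage
    SMUDGE = "smudge"                # AI changed argument strength, not content
    MASKING_TAPE = "masking tape"    # passage initially generated by AI
    GLUE_RESIDUE = "glue residue"    # AI-generated text later removed
    GHOST_TEXT = "ghost text"        # AI output requested but never used


@dataclass
class DraftEvent:
    """One hypothetical entry in a document's draft history."""
    passage_id: str
    actor: str   # assumed values: "human" or "ai"
    action: str  # assumed values: "insert", "revise", "adjust_strength",
                 # "delete", "discard_output"


# Assumed cutoff for what counts as "heavily revised".
REVISION_THRESHOLD = 3


def classify(event: DraftEvent, history: List[DraftEvent]) -> Optional[Mark]:
    """Return the mark this event would trigger, or None if it triggers none."""
    if event.action == "discard_output":
        return Mark.GHOST_TEXT
    if event.actor == "ai" and event.action == "insert":
        return Mark.MASKING_TAPE
    if event.actor == "ai" and event.action == "adjust_strength":
        return Mark.SMUDGE

    # Remaining rules depend on what happened to this passage earlier.
    prior = [e for e in history if e.passage_id == event.passage_id]
    if event.action == "delete" and any(
        e.actor == "ai" and e.action == "insert" for e in prior
    ):
        return Mark.GLUE_RESIDUE
    if event.action == "revise":
        revisions = 1 + sum(e.action == "revise" for e in prior)
        if revisions >= REVISION_THRESHOLD:
            return Mark.ERASER_CRUMBS
    return None


class DraftTracker:
    """Classifies each event as it is recorded, then stores it in the history."""

    def __init__(self) -> None:
        self.history: List[DraftEvent] = []

    def record(self, event: DraftEvent) -> Optional[Mark]:
        mark = classify(event, self.history)
        self.history.append(event)
        return mark


tracker = DraftTracker()
first = tracker.record(DraftEvent("p1", "ai", "insert"))      # AI drafts a passage
second = tracker.record(DraftEvent("p1", "human", "delete"))  # writer removes it
```

In this sketch, `first` is the masking tape mark and `second` is glue residue, because the deleted passage was originally inserted by AI. Classifying each event when it is recorded, rather than re-scanning the finished document, is what would let marks appear while the writer is still drafting.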

Reading DraftMarks

To evaluate how the tool functions beyond the lab, the team conducted a follow‑up study with 70 participants, including students, teachers, journalists, and general readers. Their reactions to reviewing a DraftMarks-annotated document varied in revealing ways.

Instructors were most interested in seeing the writing process unfold: how ideas developed, how heavily AI was used, and where students exercised judgment. General readers, meanwhile, used the marks to assess something less measurable but equally important — trust. For them, DraftMarks offered cues about authorial intent and authenticity, helping readers decide how much confidence to place in a piece of writing.

A Shift From Detection to Reflection

Unlike AI detectors, which merely report a percentage, DraftMarks is designed to prompt reflection from both writers and readers.

“DraftMarks completely changed how I think about my own writing,” Coscia said. “I was surprised by how much I cared about authorial intent once I could actually see how AI affected my tone. It made me realize small AI choices can subtly reshape what I’m trying to say.”

As AI continues to reshape how writing happens, the research team hopes DraftMarks will help shift the conversation toward transparency. Tools like this could offer educators and students a clearer window into how learning happens when humans and AI write together.

This work is funded through the AI Research Institutes program by the National Science Foundation and the Institute of Education Sciences, U.S. Department of Education.

CITATION: Momin N. Siddiqui, Nikki Nasseri, Adam J. Coscia, Roy Pea, and Hari Subramonyam. 2026. DraftMarks: Enhancing Transparency in Human-AI Co-Writing Through Interactive Skeuomorphic Process Traces. In Proceedings of the 2026 CHI Conference on Human Factors in Computing Systems (CHI '26). Association for Computing Machinery, New York, NY, USA, Article 862, 1–22.

Tess Malone, Senior Research Writer/Editor

tess.malone@gatech.edu