Finding Their Groove

Researchers from Georgia Tech and Kennesaw State partner to bring musicians, dancers, and robots together for disruptive collaboration while improving human-robot trust

The impact of robots on human life is evident everywhere, from service sectors such as healthcare and retail, to industrial settings like automobile manufacturing. But it’s one thing to leverage robots as helpers — it’s another to connect with them emotionally, which could lead to better trust.

Researchers at the Georgia Institute of Technology found that embedding emotion-driven sounds and gestures in robotic arms helps establish trust and likability between humans and their AI counterparts. They have explored these connections not only in the lab but also on the live-performance stage in a unique collaboration with Kennesaw State University.

With National Science Foundation funding, Georgia Tech music technology researchers have programmed a “FOREST” of improvising robot musicians and dancers who interact with human partners. The results of their research have been conditionally accepted for publication in the open-access journal Frontiers in Robotics and AI.

“It was fascinating to see how choreographers and dancers who are not surrounded by technology on a daily basis learn to trust and slowly build a relationship with our robotic artists,” said Gil Weinberg, founder and director of the Georgia Tech Center for Music Technology in the College of Design and the principal investigator on the project. “When you bring this kind of interesting, interdisciplinary work together and merge different disciplines, it hopefully will inspire new advances in the fields of dance, music, and robotics.”

Georgia Tech hopes to take the FOREST performance to the global stage, starting in Atlanta with a free concert on Georgia Tech’s campus on Dec. 11 featuring student work, then moving to Israel and other locations to be announced.

According to the researchers, the FOREST robots and accompanying musical robots are not rigid mimickers of human melody and movement. Rather, they exhibit a remarkable level of emotional expression and human-like gesture fluency, using what the researchers call “emotional prosody and gesture” to project emotions and build trust.

“The big thing we really wanted to focus on was capturing natural emotion,” said music technology graduate student Amit Rogel. “One of my main challenges was to make the robot look as if it's moving like a human.”

Rogel designed a new framework to guide the robots’ emotion-driven motions based on human gestures. Donning a motion capture suit and recording body movements, he mapped each movement to different degrees of freedom. He and the other researchers soon learned that as one part of the body moves, other body parts make small corrections.

“We tried modeling just how a human would follow after adding a slight movement so when you change different parameters on each joint, it will react to a primary joint moving.”

The team then scaled it into a more lifelike, dance-like scenario that would capture the fluid motion in each robot.
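The reactive behavior Rogel describes, in which secondary joints make small corrections when a primary joint moves, can be illustrated with a short sketch. The Python below is not the FOREST framework. It is a minimal illustration that assumes each secondary joint tracks a scaled copy of the primary joint's angle through simple exponential smoothing. The names `FollowerJoint` and `follow` and the `gain` and `smoothing` parameters are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FollowerJoint:
    """Hypothetical secondary joint that reacts to a primary joint."""
    gain: float        # fraction of the primary motion this joint picks up
    smoothing: float   # 0..1; higher values give a slower, more delayed reaction
    angle: float = 0.0  # current joint angle, in degrees

    def step(self, primary_angle: float) -> float:
        # The joint aims for a scaled-down copy of the primary angle.
        target = self.gain * primary_angle
        # Exponential smoothing: close part of the gap on each time step.
        self.angle += (1.0 - self.smoothing) * (target - self.angle)
        return self.angle


def follow(primary: List[float], joints: List[FollowerJoint]) -> List[List[float]]:
    """Return one angle trajectory per follower joint."""
    return [[joint.step(angle) for angle in primary] for joint in joints]


# Assumed example: the primary joint jumps to 10 degrees and holds there.
primary_trajectory = [0.0, 10.0, 10.0, 10.0]
followers = [FollowerJoint(gain=0.5, smoothing=0.5),
             FollowerJoint(gain=0.2, smoothing=0.8)]
trajectories = follow(primary_trajectory, followers)
```

In this sketch, a joint with a lower `gain` and higher `smoothing` responds more subtly and more slowly, which is one simple way to approximate a small trailing correction.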

The other part of the FOREST project involved getting robots to project emotion through sound.

“The idea for my research came from the question, ‘How can robots talk without language?’” recalled Richard Savery, a 2021 Georgia Tech doctoral graduate of the music technology program who assisted in writing the original NSF proposal.

Weinberg explained that the team developed a new machine learning architecture to generate audio based on a newly created dataset of emotionally labeled singing phrases. These Emotional Musical Prosody (EMP) phrases were embedded in the robots to improve human-robot trust. The researchers then evaluated the robots’ non-verbal emotional reactions using three robotic platforms.

The FOREST project builds on earlier Georgia Tech work focused on giving robots the ability to sing and compose music. The most famous of these performing robots is Shimon, who has engaged in rap battles with musician Dashill A. Smith, a long-time collaborator with the program. Shimon, who has his own Spotify account, composes and performs his own lyrics and rhymes to counter Smith’s hip-hop lines.

“It’s been great. They’re interacting and developing a vocabulary together,” Savery said of Shimon and Smith. “I was really interested in this idea of improvising with a human, and most of that collaboration happens when you’re on stage. Performing with a person includes emotions: your co-performer will be happy if you play well. Having that vibe from the robot makes it feel much more like an actual collaborator.”

Partnering with Dancers from Kennesaw State University

Now, with FOREST in production, the Georgia Tech team has come full circle, bringing to the stage intuitive robots who can respond to the music and movements of their human partners, a group of Kennesaw State University dance alumni.

FOREST choreographer Ivan Pulinkala noted how quickly the dancers socialized and became comfortable with the robots.

“We worked through a series of functional modalities with the dancers physically manipulating the robotic arms. The dancers became more socialized and developed an interpersonal connection with the robotic arms,” said Pulinkala, who holds dual roles of professor of dance and interim provost & vice president for Academic Affairs at Kennesaw State University.

Pulinkala considers FOREST a powerful example of “disruptive collaboration,” a term he’s coined for bringing in new artistic modalities to challenge the traditional choreographic process. Conventional choreography creates movement first, and then collaborators support that movement in different ways.

“This is the reverse: the robots were here first, and we choreographed their movement. Then, the dancers responded choreographically to the movement and functionality of the robots,” he said.

Pulinkala will highlight the FOREST project during his Dec. 3 live stream interview as part of Kennesaw State University’s Research With Relevance – Friday Features, a series spotlighting the research of college deans and faculty.

Weinberg said mastering the precision and timing of the robots’ movements has far-ranging implications in fields like aerospace.

“By addressing the challenges of timing and accuracy in music, and now in dance, you pretty much address it for any other domain, because music is so time demanding and so accurate,” he said.

He predicted that the algorithms his team has developed could be adapted for extreme environments such as in-orbit repairs using the robotic arm on board the International Space Station.

Ultimately, the Georgia Tech and Kennesaw State collaborators hope that improving human-robot interplay will inspire new forms of artistic expression while strengthening trust between humans and robotic platforms.

“There's real potential for changing the way we interact with robots much more broadly than just at a musical level but also emotionally by rethinking the dynamic and how robots communicate,” Savery said.

The Georgia Tech FOREST performance will be held Saturday, Dec. 11, at 7:30 p.m. at the Caddell Building Flex Space, which features wide glass windows for comfortable viewing from outside, weather permitting. For more information, visit the School of Music’s FOREST event page.

Kennesaw State University dance alumni Christina Massad and Darvensky Louis interact with one of the FOREST robots on stage (Photo credit: Gioconda Barral-Secchi, IGNI Productions)

Connecting emotionally: Georgia Tech’s interactive FOREST robots perform with Kennesaw State University dancers Christina Massad, Darvensky Louis, Ellie Olszeski, Bekah Crosby, and Bailey Harbaugh (Photo credit: Gioconda Barral-Secchi, IGNI Productions)

Media Relations Contact and Writer: Anne Wainscott-Sargent (404-435-5784) (asargent7@gatech.edu)

Georgia Tech Music Technology graduate student Amit Rogel (foreground, right) consults with other FOREST project researchers (L to R) Mohammad Jafari, Michael Verma, and Rose Sun. (Photo credit: Allison Carter, Georgia Tech)

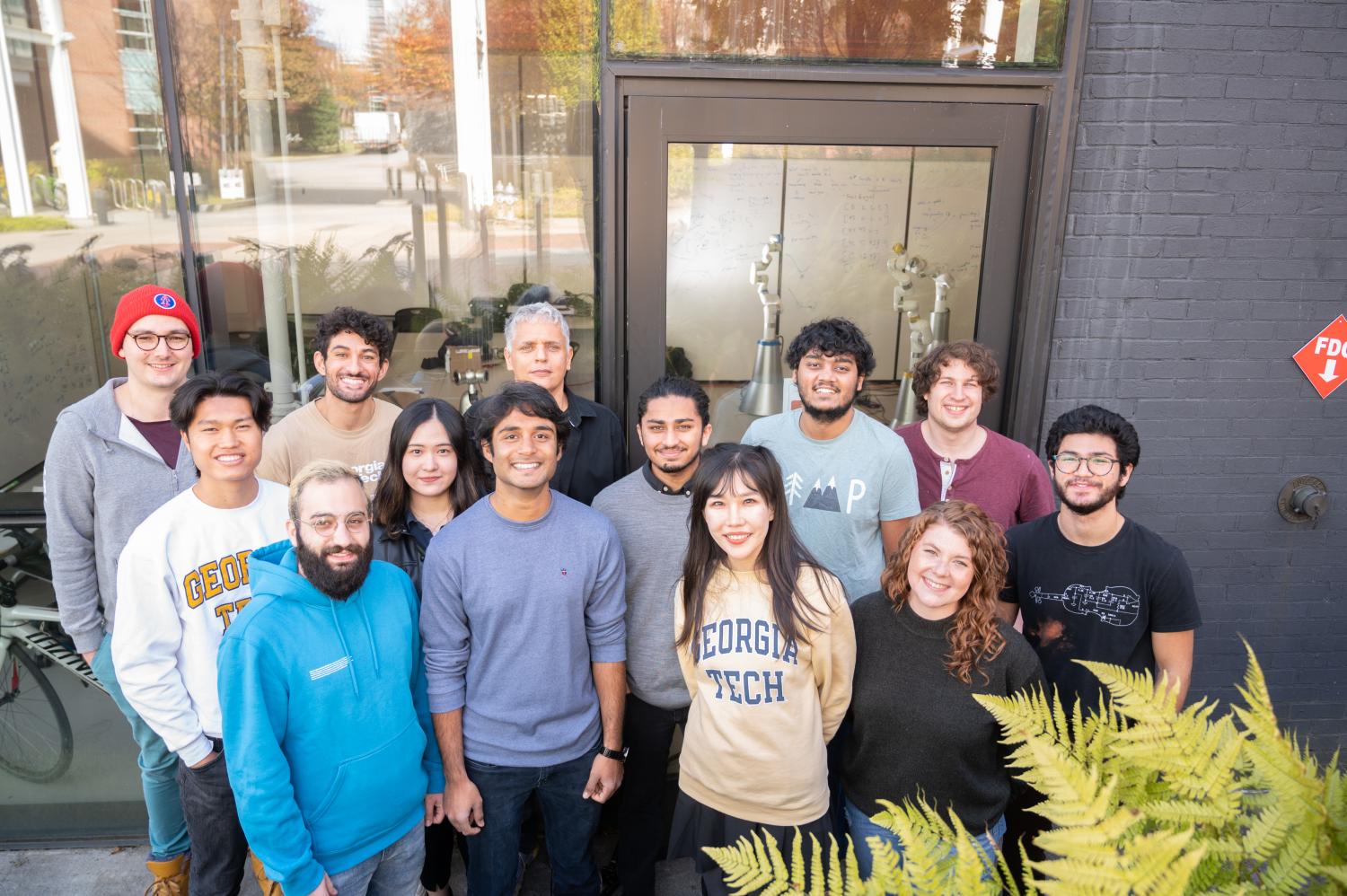

The Georgia Tech Center for Music Technology research team behind FOREST (L to R) (Back row): Richard Savery, Michael Verma, Gil Weinberg, Raghav Sankaranarayanan, and Amit Rogel. (Middle row): Jumbo Jiahe, Rose Sun, Nikhil Krishnan, and Sean Levine. (Front row): Mohammad Jafari, Nitin Hugar, Qinying Lei, and Jocelyn Kavanagh. (Photo credit: Allison Carter, Georgia Tech)