A Nexus of Ideas

Sep 11, 2025 —

Graphic Representation of networked system: Adobe Stock

The recently awarded $20 million NSF Nexus Supercomputer grant to Georgia Tech and partner institutes promises to bring incredible computing power to the CODA building. But what makes this supercomputer different, and how will it impact research in labs on campus, across disciplinary units, and across institutions?

Purpose Built for AI Discovery

Nexus is Georgia Tech’s next-generation supercomputer, replacing the HIVE. Most operational high-performance computing systems used for research were designed before the explosion in machine learning and AI. This revolution has already shown successes for scientific research and data analysis in many domains, but the compute power, complex connectivity, and data storage these systems require have limited the academic research community’s access to them. The Nexus supercomputer design process retained a robust HPC system as a base while integrating artificial intelligence, machine learning, and large-scale data science analysis from the ground up.

Expert Support for Faculty and Researchers

The Institute for Data Engineering and Science (IDEaS) and the College of Computing house the Center for Artificial Intelligence in Science and Engineering (ARTISAN) group. This team has collective experience working with national, cloud, commercial, and institutional computing resources, and decades of experience with scientific tools that assist both teaching and research faculty. Nexus is the next logical step, bringing together everything they’ve learned to build a national resource optimized for the future of AI-driven science.

Suresh Marru, principal research scientist for the ARTISAN team, highlighted the need for this new resource: “AI is a core part of the Nexus vision. Today, researchers often spend more time setting up experiments, managing data, or figuring out how to run jobs on remote clusters than doing science. With Nexus, we’re flipping that script. By embedding AI into the platform, we help automate routine tasks, suggest optimal ways to run simulations, and even assist in generating input or analyzing results. This means researchers can move faster from question to insight. Instead of wrestling with infrastructure, they can focus on discovery.”

An Accessible AI Resource for GT & US Scientific Research

Ninety percent of Nexus capacity will be made available to the national research community through the NSF Advanced Computing Systems & Services (ACSS) program. Researchers from across the country, at universities, labs, and institutions of all sizes, will have access to this next-generation AI-ready supercomputer. For Georgia Tech research faculty and staff, the new system has multiple benefits:

- 10% of the time on the machine will be available for use by Georgia Tech researchers

- Nexus will allow GT researchers a chance to try out the latest hardware for AI computing

- Thanks to cyberinfrastructure tools from the ARTISAN group, Nexus will be easier to access than previous NSF supercomputers

David Sherrill, Interim Executive Director of IDEaS, notes, "Nexus brings Georgia Tech's leadership in research computing to a whole new level. It will be the first NSF Category I Supercomputer hosted on Georgia Tech's campus. The Nexus hardware and software will boost research in the foundations of AI, and applications of AI in science and engineering."

Psychological Fallout: DARPA-Backed Project Addresses Societal Toll of Cyberattacks

Sep 10, 2025 —

The United States has prepared for decades to defend itself from every conceivable military conflict on its shores, but it turns out psychological warfare, not missiles, might pose the greatest threat to national security.

This is a challenge Assistant Professor Ryan Shandler will spend the next two years exploring as a recipient of the Young Faculty Award from the Defense Advanced Research Projects Agency (DARPA).

DARPA uses this award to recognize up-and-coming early-career faculty it hopes to continue working with in the future.

Currently, DARPA is concerned with cyberattacks from foreign countries aimed at provoking social unrest and eroding public trust in democratic institutions. A study released last year by Microsoft estimated that 600 million cyberattacks were launched every day by criminals and nation-state actors from July 2023 to July 2024.

Tools built by cybersecurity engineers help mitigate the attacks made by criminals and in some cases even help track down stolen money. However, nation-state actors don’t launch cyberattacks to score a payday.

Instead, they attack things like power plants or voting precincts as a show of strength. Exposing these vulnerabilities shows how unsafe life could be, and these actors want nothing more than to cause total panic.

So now, instead of looking only to hardware and software for the solution to this problem, DARPA is investing in the human dimension of cybersecurity.

This area has long been a focus of Shandler’s research, making him uniquely qualified to confront this previously overlooked vulnerability. His past experiments have already shown how cyberattacks generate severe public anxiety and prompt calls for physical military retaliation.

For this new project, he will track a controlled population of several thousand people by exposing them to simulated cyberattacks. At no point will the participants be made to think the attacks are real. Shandler and his team will then interview the participants to gauge how their experience impacted their perception of security.

“We are looking to see which groups are more susceptible to this kind of cumulative threat. Once we model the risk, the next step will be building countermeasures to defend against it,” he said.

However, creating a defense system that promotes societal resilience will be as challenging as it is revolutionary.

“I’m fortunate to be conducting this research in an interdisciplinary unit like the School of Cybersecurity and Privacy. Tackling a challenge of this scale requires computer scientists and social scientists working side by side,” Shandler said.

“Alone, neither field stands a chance, but together, we stand a real chance of success.”

Shandler is jointly appointed with the School of Cybersecurity and Privacy and the Sam Nunn School of International Affairs.

John Popham Communications Officer II | School of Cybersecurity and Privacy

Georgia Tech Team Designing Robot Guide Dog to Assist the Visually Impaired

Sep 10, 2025 —

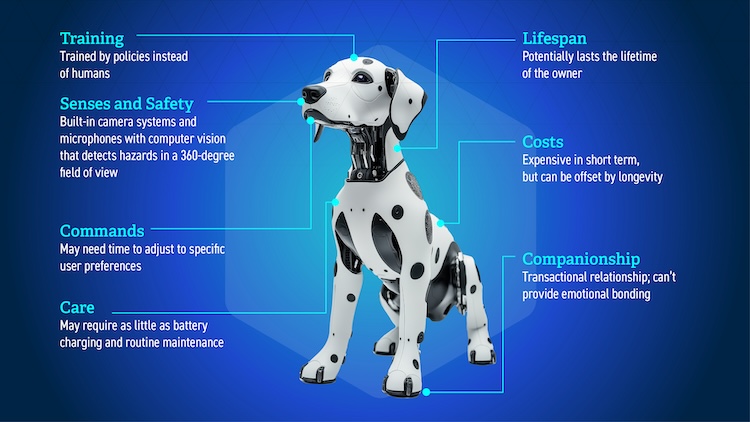

People who are visually impaired and cannot afford or care for service animals might have a practical alternative in a robotic guide dog being developed at Georgia Tech.

Before launching its prototype, a research team within Georgia Tech’s School of Interactive Computing, led by Professor Bruce Walker and Assistant Professor Sehoon Ha, is working to improve its methods and designs based on research within blind and visually impaired (BVI) communities.

“There’s been research on the technical aspects and functionality of robotic guide dogs, but not a lot of emphasis on the aesthetics or form factors,” said Avery Gong, a recent master’s graduate who worked in Walker’s lab. “We wanted to fill this gap.”

With training a guide dog costing up to $50,000, few BVI individuals can afford one, and even fewer can afford to care for and feed it. The dog also has fewer than 10 working years before it needs replacement.

Gong co-authored a paper on the design implications of the robotic guide dog that was presented at the 2025 International Conference on Robotics and Automation (ICRA) in Atlanta in May.

The consensus among the study’s participants indicates they prefer a robotic guide dog that:

- resembles a real dog and appears approachable

- has a clear identifier of being a guide dog, such as a vest

- has built-in GPS and Bluetooth connectivity

- has control options such as voice command

- has soft textures without feeling furry

- has long battery life and self-charging capability

“A lot of people said they didn’t want the dog to look too cute or appealing because it would draw too much attention,” said Aviv Cohav, another lead author of the paper and recent master’s graduate.

“Many people have issues with taking their guide dog to places, whether it’s little kids wanting to play with the dog or people not liking dogs or people being scared of them, and that reflects on the owners themselves. We wanted to look at what would be a good balance between having a functional robot that wouldn’t scare people away or be a distraction.”

The researchers also had to consider the perspectives of sighted individuals and how society at large might view a robotic guide dog.

An example of this is the amount of noise the dog makes while walking. The owner needs to hear the dog is active, but the clanky sound many off-the-shelf robots make could create disturbances in indoor spaces that amplify sounds. To offset the noise, the team developed algorithms that allow the robot to move more quietly.

Walker and his lab have examined similar scenarios that must take public perception into account.

“We like to think of Georgia Tech as going the extra mile,” Walker said. “Let’s not just make a robot, but a robot that’s going to fit into society.

“To have impact, the technologies we produce must be produced with society in mind. This is a holistic design that considers the users and all the people with whom the users interact.”

Taery Kim, a computer science Ph.D. student, began working on the concept of a robotic guide dog when she came to Georgia Tech in 2022. She and Ha, her advisor, have authored papers on building the robot’s navigation and safety components.

“When I started, I thought it would be as simple as giving the guide dog a command to take me to Starbucks or the grocery store, and it would just take me,” Kim said. “But the user must give waypoint directions — ‘go left here,’ ‘turn right,’ ‘go forward,’ ‘stop.’ Detailed commands must be delivered to the dog.”

While a real dog has naturally enhanced senses of hearing and smell that can’t be replicated, technology can provide interconnected safety features during an emergency. The researchers envision a camera system equipped with a 360-degree field of view, computer vision algorithms that detect obstacles or hazards, and voice recognition that recognizes calls for help. An SOS function could automatically call 911 at the owner’s request or if the owner is unresponsive.

Kim said the robot should also have explainability features to enhance communication with the owner. For example, if the robot suddenly stops or ignores an owner’s commands, it should tell the owner that it’s detecting a hazard in their path.
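The waypoint commands and the explainability behavior described above can be illustrated with a small sketch. This is not the research team's software: the command set is taken from Kim's examples, while the function name `handle_command`, the `hazard` label, and the choice to stop on an unrecognized command are all assumptions made for illustration.

```python
from typing import Optional, Tuple

# Waypoint-style commands, based on the examples quoted in the article.
WAYPOINT_COMMANDS = {"go left", "turn right", "go forward", "stop"}


def handle_command(command: str, hazard: Optional[str] = None) -> Tuple[str, str]:
    """Return (action, spoken_message) for one voice command.

    `hazard` is a hypothetical label from an obstacle detector
    (for example "stairs"), or None when the path is clear.
    """
    cmd = command.strip().lower()
    if cmd not in WAYPOINT_COMMANDS:
        # Assumption: an unrecognized command makes the robot hold still.
        return "stop", f"I did not understand '{command}'."
    if hazard is not None and cmd != "stop":
        # Override the command and say why, rather than stopping silently.
        return "stop", f"Stopping instead of '{cmd}': {hazard} detected ahead."
    return cmd, f"OK: {cmd}."


action, message = handle_command("go forward", hazard="stairs")
print(action, "|", message)
```

The point of returning a message alongside every action is that the owner always hears why the robot did something other than what was asked.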

Manufacturing a robot at scale would initially be expensive, but the researchers believe the cost would eventually be offset because of its longevity. BVI individuals may only need to purchase one during their lifetime.

To introduce a prototype, the multidisciplinary research team recognizes that it needs to enlist experts from other fields to adequately address the various implications and research gaps inherent in the project.

Walker said the teams welcome additional partners who are keen to tackle challenges ranging from design and engineering to battery life to human-robot interaction.

Nathan Deen, Communications Officer

School of Interactive Computing

nathan.deen@cc.gatech.edu

Georgia Tech Researchers Named Finalists for Prestigious Blavatnik Science Awards

Sep 09, 2025 — Atlanta, GA

Headshots of Matthew McDowell and Ryan Lively

Two Georgia Tech researchers in the College of Engineering have been named finalists for the 2025 Blavatnik National Awards for Young Scientists. Their discoveries, which could create cleaner industrial processes and safer, more reliable batteries, have important potential impacts for daily life.

The Blavatnik Awards are presented by the Blavatnik Family Foundation and are administered by the New York Academy of Sciences. They honor the most promising early-career researchers in the U.S. across life sciences, chemical sciences, and physical sciences and engineering. The awards are among the most prestigious and competitive in science.

This dual recognition underscores Georgia Tech’s growing national leadership in high-impact, interdisciplinary research.

Ryan Lively, Thomas C. DeLoach Jr. Endowed Professor in the School of Chemical and Biomolecular Engineering, is recognized in the Chemical Sciences category for pioneering scalable technologies that will reduce industrial carbon emissions and energy use. He develops new materials that can capture carbon and separate chemicals, using much less energy than conventional methods. His innovations could make industry cleaner and play a key role in addressing climate change.

Matthew McDowell, Carter N. Paden Jr. Distinguished Chair in the George W. Woodruff School of Mechanical Engineering, holds a joint appointment in the School of Materials Science and Engineering. Recognized in the Physical Sciences and Engineering category for groundbreaking battery research, he and his team develop new materials to make batteries last longer and store more energy. He has discovered ways to visualize how battery materials change during use, insights that help improve the performance and safety of future energy technologies.

This year’s 18 finalists were selected from 310 nominees. On Oct. 7, 2025, three laureates will be announced at a gala at New York City’s American Museum of Natural History. Each laureate will receive $250,000, the largest unrestricted scientific prize for early-career researchers in the U.S.

Shelley Wunder-Smith

AI’s Ballooning Energy Consumption Puts Spotlight On Data Center Efficiency

Sep 03, 2025 —

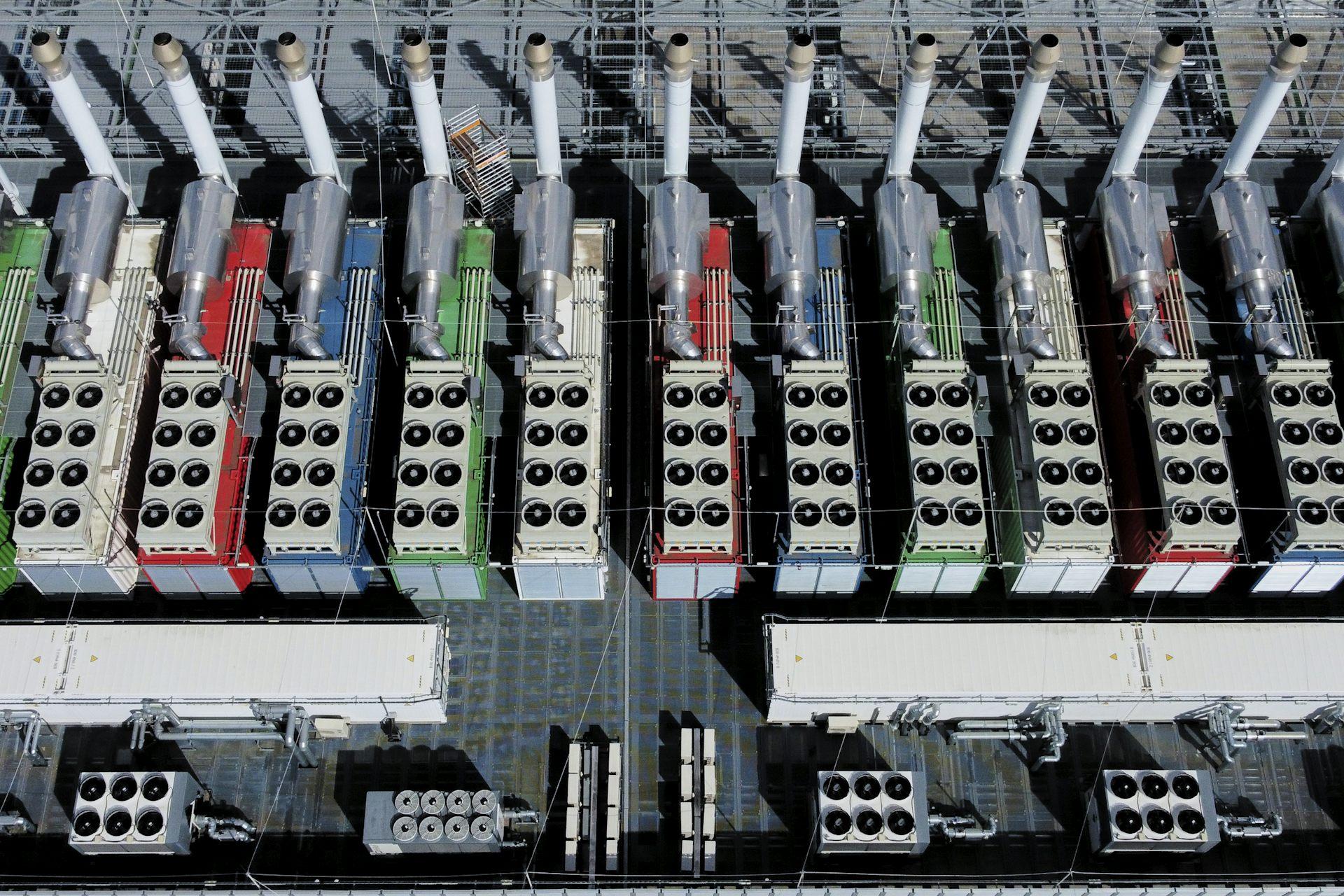

These ‘chillers’ on the roof of a data center in Germany, seen from above, work to cool the equipment inside the building. AP Photo/Michael Probst

Artificial intelligence is growing fast, and so is the number of computers that power it. Behind the scenes, this rapid growth is putting a huge strain on the data centers that run AI models. These facilities are using more energy than ever.

AI models are getting larger and more complex. Today’s most advanced systems have billions of parameters, the numerical values derived from training data, and run across thousands of computer chips. To keep up, companies have responded by adding more hardware, more chips, more memory and more powerful networks. This brute force approach has helped AI make big leaps, but it’s also created a new challenge: Data centers are becoming energy-hungry giants.

Some tech companies are responding by looking to power data centers on their own with fossil fuel and nuclear power plants. AI energy demand has also spurred efforts to make more efficient computer chips.

I’m a computer engineer and a professor at Georgia Tech who specializes in high-performance computing. I see another path to curbing AI’s energy appetite: Make data centers more resource aware and efficient.

Energy and Heat

Modern AI data centers can use as much electricity as a small city. And it’s not just the computing that eats up power. Memory and cooling systems are major contributors, too. As AI models grow, they need more storage and faster access to data, which generates more heat. Also, as the chips become more powerful, removing heat becomes a central challenge.

Data centers house thousands of interconnected computers. Alberto Ortega/Europa Press via Getty Images

Cooling isn’t just a technical detail; it’s a major part of the energy bill. Traditional cooling is done with specialized air conditioning systems that remove heat from server racks. New methods like liquid cooling are helping, but they also require careful planning and water management. Without smarter solutions, the energy requirements and costs of AI could become unsustainable.

Even with all this advanced equipment, many data centers aren’t running efficiently. That’s because different parts of the system don’t always talk to each other. For example, scheduling software might not know that a chip is overheating or that a network connection is clogged. As a result, some servers sit idle while others struggle to keep up. This lack of coordination can lead to wasted energy and underused resources.

A Smarter Way Forward

Addressing this challenge requires rethinking how to design and manage the systems that support AI. That means moving away from brute-force scaling and toward smarter, more specialized infrastructure.

Here are three key ideas:

Address variability in hardware. Not all chips are the same. Even within the same generation, chips vary in how fast they operate and how much heat they can tolerate, leading to heterogeneity in both performance and energy efficiency. Computer systems in data centers should recognize differences among chips in performance, heat tolerance and energy use, and adjust accordingly.

Adapt to changing conditions. AI workloads vary over time. For instance, thermal hotspots on chips can trigger the chips to slow down, fluctuating grid supply can cap the peak power that centers can draw, and bursts of data between chips can create congestion in the network that connects them. Systems should be designed to respond in real time to things like temperature, power availability and data traffic.

Break down silos. Engineers who design chips, software and data centers should work together. When these teams collaborate, they can find new ways to save energy and improve performance. To that end, my colleagues, students and I at Georgia Tech’s AI Makerspace, a high-performance AI data center, are exploring these challenges hands-on. We’re working across disciplines, from hardware to software to energy systems, to build and test AI systems that are efficient, scalable and sustainable.
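The first two ideas above can be made concrete with a rough sketch of a resource-aware placement decision. Everything in it is hypothetical: the `Chip` fields, the thresholds, and the "most thermal headroom" rule are assumptions chosen for illustration, not a description of the AI Makerspace or of any real scheduler.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Chip:
    name: str
    temp_c: float            # current temperature reading
    max_temp_c: float        # hypothetical per-chip heat tolerance
    link_utilization: float  # 0.0 (idle) to 1.0 (saturated) network link
    watts_per_job: float     # hypothetical power draw for one job
    busy: bool = False


def pick_chip(chips: List[Chip], power_budget_w: float,
              max_link_utilization: float = 0.9) -> Optional[Chip]:
    """Pick the idle chip with the most thermal headroom that also fits
    the current power budget and is not behind a congested link."""
    candidates = [
        c for c in chips
        if not c.busy
        and c.temp_c < c.max_temp_c
        and c.link_utilization < max_link_utilization
        and c.watts_per_job <= power_budget_w
    ]
    if not candidates:
        return None  # nothing suitable right now; the job should wait
    return max(candidates, key=lambda c: c.max_temp_c - c.temp_c)


chips = [
    Chip("gpu0", temp_c=82.0, max_temp_c=85.0, link_utilization=0.20, watts_per_job=400.0),
    Chip("gpu1", temp_c=60.0, max_temp_c=80.0, link_utilization=0.95, watts_per_job=380.0),
    Chip("gpu2", temp_c=65.0, max_temp_c=90.0, link_utilization=0.30, watts_per_job=420.0),
]
chosen = pick_chip(chips, power_budget_w=450.0)
print(chosen.name if chosen else "no chip available")
```

A scheduler that ignored this telemetry might place the job on `gpu1` simply because it is the coolest chip, even though its network link is nearly saturated. Feeding temperature, power, and traffic data into one decision is the kind of coordination the ideas above call for.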

Scaling With Intelligence

AI has the potential to transform science, medicine, education and more, but risks hitting limits on performance, energy and cost. The future of AI depends not only on better models, but also on better infrastructure.

To keep AI growing in a way that benefits society, I believe it’s important to shift from scaling by force to scaling with intelligence.

This article is republished from The Conversation under a Creative Commons license. Read the original article.

Author:

Divya Mahajan, assistant professor of Computer Engineering, Georgia Institute of Technology

Media Contact:

Shelley Wunder-Smith

shelley.wunder-smith@research.gatech.edu

How a Veteran Gained Invaluable Skills in AI Manufacturing at Georgia Tech

Aug 01, 2025 —

Air Force veteran Michael Trigger completed an internship at Georgia Tech's Advanced Manufacturing Pilot Facility.

Michael Trigger, an Air Force veteran in his late 50s, found an unexpected opportunity at Georgia Tech. After driving a truck for several years, he was ready to learn some new skills.

Trigger’s interest in artificial intelligence (AI) led him to a manufacturing course at the Veterans Education Career Transition Resource Center in Warner Robins, Georgia. With support from the Georgia Tech-led Georgia Artificial Intelligence in Manufacturing program (Georgia AIM), the center trains veterans in robotics using cutting-edge AI manufacturing technologies.

Digital Dashboard Helps Everyone Find Accessible Climate Solutions in Georgia

Sep 04, 2025 —

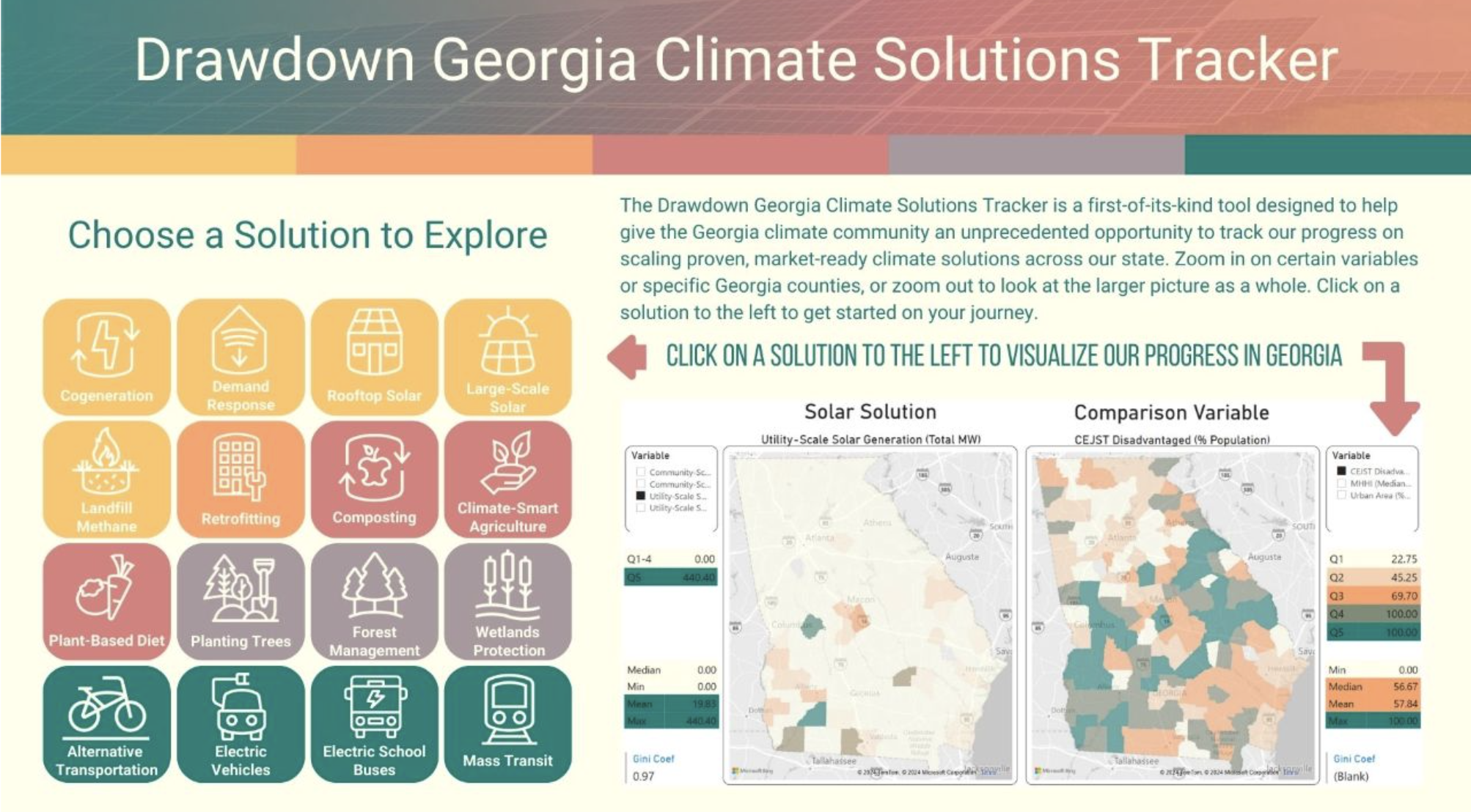

Electric vehicles. Rooftop solar. Cycling to work. Knowing where to start when reducing your personal carbon footprint can be daunting. But a new tool from Georgia Tech makes it easier for anyone to figure out how they can help address climate change.

The Drawdown Georgia Solutions Tracker is a digital dashboard that enables everyday Georgians to see how effective various technologies could be for each county. The tracker analyzes public data for 16 solutions — from planting trees to public transit — that can lower greenhouse gas emissions. The tracker is equally essential for policymakers and business leaders, enabling them to identify opportunities to propose legislation or adjust operations to reduce carbon emissions.

To use the tracker, viewers click on a solution to see its impact. Then, they specify a particular county, and the data is tailored to the most relevant metric. For example, if someone picks “plant-based diet” as a solution, they can see how many vegan restaurants are already in their county. The tracker also contrasts the climate solution with a relevant area that might benefit if the solution is implemented. For the plant-based example, the tracker compares it to urban density.

This tracker is one of the many initiatives of Drawdown Georgia, one of the Ray C. Anderson Foundation’s key funding initiatives based on research conducted by Georgia Tech, Georgia State University, the University of Georgia, and Emory University. Drawdown Georgia's goal is to reduce Georgia’s carbon impact by 57% by 2030 and to accelerate Georgia’s progress toward net-zero greenhouse emissions.

Drawdown Georgia also developed a carbon emissions tracker that shows carbon emission levels by county. The dashboard was a success, but the Drawdown Georgia team wanted to create a more proactive tool. The Solutions Tracker was designed so that anyone could make small daily changes to improve the climate, not just track it.

“We began the Drawdown Georgia project with the goal of cutting state pollution significantly,” said Marilyn Brown, Regents’ Professor and the Brook Byers Professor of Sustainable Systems in the Jimmy and Rosalynn Carter School of Public Policy. “To get Georgians involved, we decided to focus on local and regional opportunities to reduce emissions.”

Drawdown Data

The data combines federal and state sources from the U.S. Energy Information Administration, the National Renewable Energy Laboratory, and the Department of Agriculture. Some solutions may seem obvious, like planting trees, but others are more niche. For example, decomposing trash often produces methane gas, which means that landfills contribute to greenhouse gas emissions — important information for policymakers to consider when developing carbon reduction strategies.

The researchers hope everyone will use the tracker. Politicians and policymakers can find new ideas for legislation or the adoption of these solutions. Business leaders can find opportunities to hit their decarbonization goals. Georgians can use the tracker to figure out which solutions are most sustainable for their lives. Even scientists can learn which methods to home in on for their research. Since the tracker is available via Creative Commons, anyone can use the data to build their own tools or models.

The tracker is already having a real-world impact. Brown and the Drawdown Georgia team have collaborated with the state of Georgia and the 29-county metro Atlanta area on their carbon action plans. They’ve also partnered with 75 businesses on carbon action plans and other solutions through the Drawdown Georgia Business Compact, managed by the Ray C. Anderson Center for Sustainable Business in the Scheller College of Business. As these stakeholders ask questions about different climate solution impacts, the team has expanded the tracker accordingly. They’ve also recently redesigned the user interface to make it even more accessible for everyday users.

From improved public health to business opportunities, the state requires reduced greenhouse gases, and Georgia Tech is not only tracking emissions but helping to fix the problem, too.

Tess Malone, Senior Research Writer/Editor

tess.malone@gatech.edu

The Algorithm Will See You Now — But Only If You’re the Perfect Patient

Sep 02, 2025 —

An illustration representing a doctor working with an AI-powered health device.

In the morning, before you even open your eyes, your wearable device has already checked your vitals. By the time you brush your teeth, it has scanned your sleep patterns, flagged a slight irregularity, and adjusted your health plan. As you take your first sip of coffee, it’s already predicted your risks for the week ahead.

Georgia Tech researchers warn that this version of AI healthcare imagines a patient who is "affluent, able-bodied, tech-savvy, and always available." Those who don’t fit that mold, they argue, risk becoming invisible in the healthcare system.

The Ideal Future

In their study, published in the Proceedings of the ACM Conference on Human Factors in Computing Systems, the researchers analyzed 21 AI-driven health tools, ranging from fertility apps and wearable devices to diagnostic platforms and chatbots. They used sociological theory to understand the vision of the future these tools promote — and the patients they leave out.

“These systems envision care that is seamless, automatic, and always on,” said Catherine Wieczorek, a Ph.D. student in human-centered computing in the School of Interactive Computing and lead author of the study. “But they also flatten the messy realities of illness, disability, and socioeconomic complexity.”

Four Futures, One Narrow Lens

During their analysis, the researchers discovered four recurring narratives in AI-powered healthcare:

- Care that never sleeps. Devices track your heart rate, glucose levels, and fertility signals — all in real time. You are always being watched, because that’s framed as “care.”

- Efficiency as empathy. AI is faster, more objective, and more accurate. Unlike humans, it doesn’t get tired or biased. This pitch downplays the value of human judgment and connection.

- Prevention as perfection. A world where illness is avoided through early detection if you have the right sensors, the right app, and the right lifestyle.

- The optimized body. You’re not just healthy, you’re high-performing. The tech isn’t just treating you; it’s upgrading you.

“It’s like healthcare is becoming a productivity tool,” Wieczorek said. “You’re not just a patient anymore. You’re a project.”

Not Just a Tool, But a Teammate

This study also points to a critical transformation in which AI is no longer just a diagnostic tool; it’s a decision-maker. Described by the researchers as “both an agent and a gatekeeper,” AI now plays an active role in how care is delivered.

In some cases, AI systems are even named and personified, like Chloe, an IVF decision-support tool. “Chloe equips clinicians with the power of AI to work better and faster,” its promotional materials state. By framing AI this way — as a collaborator rather than just software — these systems subtly redefine who, or what, gets to be treated.

“When you give AI names, personalities, or decision-making roles, you’re doing more than programming. You’re shifting accountability and agency. That has consequences,” said Shaowen Bardzell, chair of Georgia Tech’s School of Interactive Computing and co-author of the study.

“It blurs the boundaries,” Wieczorek noted. “When AI takes on these roles, it’s reshaping how decisions are made and who holds authority in care.”

Calculated Care

Many AI tools promise early detection, hyper-efficiency, and optimized outcomes. But the study found that these systems risk sidelining patients with chronic illness, disabilities, or complex medical needs — the very people who rely most on healthcare.

“These technologies are selling worldviews,” Wieczorek explained. “They’re quietly defining who healthcare is for, and who it isn’t.”

By prioritizing predictive algorithms and automation, AI can strip away the context and humanity that real-world care requires.

“Algorithms don’t see nuance. It’s difficult for a model to understand how a patient might be juggling multiple diagnoses or understand what it means to manage illness, while also navigating other important concerns like financial insecurity or caregiving. They are predetermined inputs and outputs,” Wieczorek said. “While these systems claim to streamline care, they are also encoding assumptions about who matters and how care should work. And when those assumptions go unchallenged, the most vulnerable patients are often the ones left out.”

AI for ALL

The researchers argue that future AI systems must be developed in collaboration with those who don’t fit in the vision of a “perfect patient.”

“Innovation without ethics risks reinforcing existing inequalities. It’s about better tech and better outcomes for real people,” Bardzell said. “We’re not anti-innovation. But technological progress isn’t just about what we can do. It’s about what we should do — and for whom.”

Wieczorek and Bardzell aren’t trying to stop AI from entering healthcare. They’re asking AI developers to understand who they’re really serving.

Funding:

This work was supported by the National Science Foundation (Grant #2418059).

Michelle Azriel, Sr. Writer-Editor

mazriel3@gatech.edu

Georgia Tech’s Jill Watson Outperforms ChatGPT in Real Classrooms

Sep 02, 2025 —

A new version of Georgia Tech’s virtual teaching assistant, Jill Watson, has demonstrated that artificial intelligence can significantly improve the online classroom experience. Developed by the Design Intelligence Laboratory (DILab) and the U.S. National Science Foundation AI Institute for Adult Learning and Online Education (AI-ALOE), the latest version of Jill Watson integrates OpenAI’s ChatGPT and is outperforming OpenAI’s own assistant in real-world educational settings.

Jill Watson not only answers student questions with high accuracy but also improves teaching presence and correlates with better academic performance. Researchers believe this is the first documented instance of a chatbot enhancing teaching presence in online learning for adult students.

How Jill Watson Shaped Intelligent Teaching Assistants

First introduced in 2016 using IBM’s Watson platform, Jill Watson was the first AI-powered teaching assistant deployed in real classes. It began by responding to student questions on discussion forums like Piazza using course syllabi and a curated knowledge base of past Q&As. Widely covered by major media outlets including The Chronicle of Higher Education, The Wall Street Journal, and The New York Times, the original Jill pioneered new territory in AI-supported learning.

Subsequent iterations addressed early biases in the training data and transitioned to more flexible platforms like Google’s BERT in 2019, allowing Jill to work across learning management systems such as EdStem and Canvas. With the rise of generative AI, the latest version now uses ChatGPT to engage in extended, context-rich dialogue with students using information drawn directly from courseware, textbooks, video transcripts, and more.

Future of Personalized, AI-Powered Learning

Designed around the Community of Inquiry (CoI) framework, Jill Watson aims to enhance “teaching presence,” one of three key factors in effective online learning, alongside cognitive and social presence. Teaching presence includes both the design of course materials and facilitation of instruction. Jill supports this by providing accurate, personalized answers while reinforcing the structure and goals of the course.

The system architecture includes a preprocessed knowledge base, a MongoDB-powered memory for storing conversation history, and a pipeline that classifies questions, retrieves contextually relevant content, and moderates responses. Jill is built to avoid generating harmful content and only responds when sufficient verified course material is available.
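The flow of that pipeline (classify, retrieve, moderate, remember) can be sketched in a few lines. This is only an illustration of the general pattern, not Jill Watson's implementation: the knowledge-base entries are invented, keyword matching stands in for real classification and retrieval, and a plain Python list stands in for the MongoDB-backed conversation memory.

```python
from typing import Dict, List, Optional

# Hypothetical stand-in for the preprocessed knowledge base (invented content).
KNOWLEDGE_BASE: Dict[str, str] = {
    "late policy": "Late assignments lose 10% per day.",
    "exam format": "The final exam is open book.",
}

# In-memory stand-in for the conversation store (MongoDB in the real system).
conversation_history: List[Dict[str, str]] = []

# Hypothetical list of terms that cause a question to be declined.
BLOCKED_WORDS = {"cheat"}


def classify(question: str) -> str:
    """Toy classifier: flag questions that contain a blocked word."""
    words = set(question.lower().replace("?", "").split())
    return "disallowed" if words & BLOCKED_WORDS else "course"


def retrieve(question: str) -> Optional[str]:
    """Toy retrieval: return the passage whose topic appears in the question."""
    q = question.lower()
    for topic, passage in KNOWLEDGE_BASE.items():
        if topic in q:
            return passage
    return None


def answer(question: str) -> str:
    """Answer only from retrieved course material; otherwise decline."""
    if classify(question) == "disallowed":
        reply = "I can't help with that."
    else:
        passage = retrieve(question)
        if passage is None:
            reply = "I don't have verified course material on that."
        else:
            reply = passage
    conversation_history.append({"question": question, "reply": reply})
    return reply


print(answer("What is the late policy?"))
```

The design choice worth noticing is the explicit decline path: when retrieval finds nothing, the sketch refuses rather than guessing, which mirrors the article's point that Jill responds only when sufficient verified material is available.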

Field-Tested in Georgia and Beyond

In Fall 2023, Jill Watson was deployed in Georgia Tech’s Online Master of Science in Computer Science (OMSCS) artificial intelligence course, serving over 600 students, and in an English course at Wiregrass Georgia Technical College (WGTC), part of the Technical College System of Georgia (TCSG).

A controlled A/B experiment in the OMSCS course allowed researchers to compare outcomes between students with and without access to Jill Watson, even though all students could use ChatGPT. The findings are striking:

- Jill Watson’s accuracy on synthetic test sets ranged from 75% to 97%, depending on the content source. It consistently outperformed OpenAI’s Assistant, which scored around 30%.

- Students with access to Jill Watson showed stronger perceptions of teaching presence, particularly in course design and organization, as well as higher social presence.

- Academic performance also improved slightly: students with Jill saw more A grades (66% vs. 62%) and fewer C grades (3% vs. 7%).

A Smarter, Safer Chatbot

While Jill Watson uses ChatGPT for natural language generation, it restricts outputs to validated course material and verifies each response using textual entailment. According to a study by Taneja et al. (2024), Jill not only delivers more accurate answers than OpenAI’s Assistant but also produces confusing or harmful content at significantly lower rates.

In that comparison, Jill Watson answers correctly 78.7% of the time, with only 2.7% of its errors categorized as harmful and 54.0% as confusing. In contrast, OpenAI’s Assistant demonstrates a much lower accuracy of 30.7%, with harmful failures occurring 14.4% of the time and confusing failures rising to 69.2%. Additionally, Jill Watson has a lower retrieval failure rate of 43.2%, compared to 68.3% for the OpenAI Assistant.

What’s Next for Jill

The team plans to expand testing across introductory computing courses at Georgia Tech and technical colleges. They also aim to explore Jill Watson’s potential to improve cognitive presence, particularly critical thinking and concept application. Although quantitative results for cognitive presence are still inconclusive, anecdotal feedback from students has been positive. One OMSCS student wrote:

“The Jill Watson upgrade is a leap forward. With persistent prompting I managed to coax it from explicit knowledge to tacit knowledge. Kudos to the team!”

The researchers also expect Jill to reduce instructional workload by handling routine questions and enabling more focus on complex student needs.

Additionally, AI-ALOE is collaborating with the publishing company John Wiley & Sons, Inc., to develop a Jill Watson virtual teaching assistant for one of their courses, with the instructor and university chosen by Wiley. If successful, this initiative could potentially scale to hundreds or even thousands of classes across the country and around the world, transforming the way students interact with course content and receive support.

A Georgia Tech-Led Collaboration

The Jill Watson project is supported by Georgia Tech, the U.S. National Science Foundation’s AI-ALOE Institute (Grants #2112523 and #2247790), and the Bill & Melinda Gates Foundation.

Core team members are Saptrishi Basu, Jihou Chen, Jake Finnegan, Isaac Lo, JunSoo Park, Ahamad Shapiro and Karan Taneja, under the direction of professor Ashok Goel and Sandeep Kakar. The team works under Beyond Question LLC, an AI-based educational technology startup.

Breon Martin