Boosting Research with LLMs Workshop

Apr 02, 2025 — Atlanta

One half of the room at the Boosting Research with LLMs Workshop held April 1, 2025

More than 80 participants attended a dynamic half-day workshop exploring how large language models (LLMs) can accelerate scientific discovery across disciplines. The workshop, Boosting Research with LLMs, was held April 1 in the Technology Square Research Building’s ballroom and was co-sponsored by the Institute for People and Technology (IPaT) and the Institute for Data Engineering and Science (IDEaS).

The workshop presented three expert-led panels to uncover practical applications of LLMs that are transforming the way engineers, natural scientists, computer scientists, and social scientists analyze data, generate insights, and advance research.

The workshop was designed for beginners and required no prior experience with LLMs, making it a unique opportunity to explore cutting-edge AI tools that can enhance research capabilities. The event was open to Georgia Tech faculty, graduate students, researchers, and staff.

“The LLM workshop drew in participants with a wide range of interests,” said David Sherrill, Regents’ Professor in the School of Chemistry and Biochemistry, and interim executive director of IDEaS. “It was exciting to hear how LLMs are being used by students and faculty to accelerate their research in science, engineering, and social sciences.”

View the agenda and the thirteen presenters >>

CREATE-X Demo Day 2025

Join us for CREATE-X Demo Day 2025! On August 28, meet the 12th batch of Georgia Tech startups using the latest technology to solve big problems and launch successful ventures. Experience the newest batch of 200+ founders at Georgia Tech during CREATE-X’s 2025 Demo Day. This year, we’re debuting the 12th cohort of founders, adding 100+ startups to our portfolio of more than 650. Collectively, our startups have generated a total portfolio valuation of more than $2.4B, with the last cohort already valued at over $80M.

DolphinGemma: How Google AI is helping decode dolphin communication

Apr 14, 2025 — Atlanta

Pictured: Thad Starner, Professor, School of Interactive Computing, wearing a device intended to communicate with dolphins.

For decades, understanding the clicks, whistles and burst pulses of dolphins has been a scientific frontier. What if we could not only listen to dolphins, but also understand the patterns of their complex communication well enough to generate realistic responses?

Today, on National Dolphin Day, Google, in collaboration with researchers at Georgia Tech and the field research of the Wild Dolphin Project (WDP), is announcing progress on DolphinGemma: a foundational AI model trained to learn the structure of dolphin vocalizations and generate novel dolphin-like sound sequences. This approach in the quest for interspecies communication pushes the boundaries of AI and our potential connection with the marine world.

Read the full story from Google here >>

This story features three Georgia Tech researchers involved with the Wild Dolphin Project.

New Wearable Device Monitors Skin Health in Real Time

Apr 14, 2025 —

The wireless device measures only two centimeters in length and one-and-a-half centimeters in width, and is the first of its kind to continuously monitor the skin's exchange of vapors with the environment.

From sun damage and pollution to cuts and infections, our skin protects us from a lot. But it isn’t impenetrable.

“We tend to think of our skin as being this impermeable barrier that’s just enclosing our body,” said Matthew Flavin, assistant professor in the School of Electrical and Computer Engineering. “Our skin is constantly in flux with the gases that are in our environment and our atmosphere.”

Led by the Georgia Institute of Technology, Northwestern University, and the Korea Institute of Science and Technology (KIST), researchers have developed a novel wearable device that can monitor the flux of vapors through the skin, offering new insights into skin health and wound healing. This technology, detailed in a recent Nature publication, represents a significant advancement in the field of wearable bioelectronics.

“You could think of this being used where a Band-Aid is being used,” said Flavin, one of the lead authors of the study. The compact, wireless device is the first wearable technology able to continuously and precisely measure water vapor, volatile organic compounds, and carbon dioxide fluxes in the skin in real time. Because increases in these factors are associated with infection and delayed healing, Flavin notes that this kind of wireless monitoring “could give clinicians a new tool to understand the properties of the skin.”

The Measurement Barrier

Our skin is our first line of defense against environmental hazards. Measuring how effectively it protects us from harmful pollutants or infections has been a significant challenge, especially over extended periods.

“The vapors coming from your skin are in very, very low concentration,” explained Flavin. “If we just put a sensor next to your skin, it would be almost impossible to control that measurement.”

The new device features a small chamber that condenses and measures vapors from the skin using specialized sensors hovering above the skin. A low-energy, bi-stable mechanism periodically refreshes the air in the chamber, allowing for continuous measurements communicated to a smartphone or tablet through Bluetooth.
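
The article does not spell out how a flux value is derived from the chamber readings. As a rough illustration only, the sketch below assumes a generic closed-chamber approach: while the chamber is sealed, vapor concentration rises roughly linearly, and the flux is the slope of that rise scaled by chamber volume over skin contact area. The function name `chamber_flux`, its parameters, and the sample numbers are hypothetical and are not taken from the study.

```python
def chamber_flux(times_s, concentrations, chamber_volume_m3, skin_area_m2):
    """Estimate flux through the skin from readings in a sealed chamber.

    Assumption (not from the article): concentration rises linearly while
    the chamber is closed, so flux = (dC/dt) * V / A.

    times_s           -- sample times in seconds
    concentrations    -- vapor concentration at each time, e.g. g/m^3
    chamber_volume_m3 -- enclosed chamber volume in m^3
    skin_area_m2      -- skin area covered by the chamber in m^2
    Returns flux in concentration-units * m / s (g/(m^2*s) for g/m^3 input).
    """
    n = len(times_s)
    if n < 2 or n != len(concentrations):
        raise ValueError("need at least two paired samples")

    # Ordinary least-squares slope of concentration versus time.
    mean_t = sum(times_s) / n
    mean_c = sum(concentrations) / n
    sxx = sum((t - mean_t) ** 2 for t in times_s)
    if sxx == 0:
        raise ValueError("sample times must not all be identical")
    sxy = sum((t - mean_t) * (c - mean_c)
              for t, c in zip(times_s, concentrations))
    slope = sxy / sxx

    return slope * chamber_volume_m3 / skin_area_m2


if __name__ == "__main__":
    # Made-up readings taken between two refreshes of the chamber air.
    flux = chamber_flux([0, 1, 2, 3], [0.0, 2.0, 4.0, 6.0],
                        chamber_volume_m3=1e-6, skin_area_m2=1e-4)
    print(f"estimated flux: {flux:.4f} g/(m^2*s)")
```

In this picture, periodically refreshing the chamber air resets the concentration so that a new slope can be measured, which is one way continuous monitoring could be built from repeated short measurements.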

“There are other devices that can measure certain parts of what we're talking about here,” said Flavin, “but they are not feasible for a wearable device, can't do this continuously, and are not able to get all the information that our device can get.”

Scratching the Surface

By tracking the skin's water vapor flux, also known as transepidermal water loss, the device can assess skin barrier function and wound healing. This capability is particularly valuable for tracking the healing process in diabetic patients, who often have sensory issues that complicate wound monitoring. “What you see in diabetes is that even after the wound looks like it's healed, there's still a persistent impairment of that barrier,” said Flavin. This new non-invasive device tracks those properties.

“There are many areas where people don't have great access to healthcare, and there aren’t doctors monitoring wound healing processes,” Flavin added. “Something that can be used to monitor that remotely could make care more accessible to people with these conditions.”

The device’s wearable nature also makes it ideal for studying the long-term effects of exposure to environmental hazards like wildfires or chemical fumes on skin function and overall health.

Though the applications in health are numerous, the research team is continuing to explore different ways to use the device. “This measurement modality is very new and we're still learning what we can do with it,” said Jaeho Shin, a senior researcher at KIST and a co-leader of the study. “It's a new way of measuring what's inside the body.”

“This is a great example of the kind of technology that can emerge from research at the interface between engineering science and medical practice,” said John Rogers, a materials science professor at Northwestern and another co-leader of the study. “The capabilities provided by this device will not only improve patient care, but they will also lead to improved understanding of the skin, the skin microbiome, the processes of wound healing, and many others.”

As a new faculty member and a member of Georgia Tech’s Neuro Next Initiative, a burgeoning interdisciplinary research hub for neuroscience, neurotechnology, and society, Flavin attributes the success of this research to its interdisciplinary nature.

“A broad challenge we have in these fields of research is that they integrate a lot of different areas. One of the reasons I came to Georgia Tech is because it's a place where you have access to all those different areas of expertise.”

DOI: https://doi.org/10.1038/s41586-025-08825-2

Funding: Querrey-Simpson Institute for Bioelectronics and the Center for Advanced Regenerative Engineering (CARE), Northwestern University; National Research Foundation of Korea; National Institutes of Health (NIH), National Institute of Diabetes and Digestive and Kidney Diseases, National Institute of Biomedical Imaging and Bioengineering.

Writer: Audra Davidson

Research Communications Program Manager

Neuro Next Initiative

Media contact: Angela Barajas Prendiville

Director

Institute Media Relations

2024 Foley Scholar Award Winners - Research Presentations

April 17, 2025

12:00 p.m. Lunch; 12:30 p.m. talk starts

Location: TSRB 1st Floor Ballroom, 85 Fifth St NW, Atlanta, GA 30308

The 2024 Foley Scholar Award winners, Momin Siddiqui (MS) and Vanessa Oguamanam, Charles Ramey, and Jiawei Zhou (PhD), will present their research.

Code Switching in the Digital World

Apr 09, 2025 — Atlanta

Technology has transformed how we communicate. Research from the Ivan Allen College of Liberal Arts shows that code-switching — the practice of switching between languages, dialects, accents, tones, or cultures in conversation — is changing with it.

Faculty members in the School of Modern Languages and the Sam Nunn School of International Affairs have published three studies examining how language and cultural code-switching have adapted to the digital age, revealing speakers’ fluency, promoting self-expression, and making messaging more effective. Their research is relevant, as the population of bilingual and bicultural people increases in the United States.

By better understanding code-switching in digital spaces, “we can reveal insights into language dynamics and cultural identity among young bilingual speakers,” says Hongchen Wu, an assistant professor in the School of Modern Languages. “Annotated code-switching datasets are also a valuable resource for training and testing language technologies tailored to bilingual speakers — allowing, for example, an AI-assistant that can understand their code-switching with no struggles.”

14th Annual Southeastern Pediatric Research Conference

The 14th Annual Southeastern Pediatric Research Conference will showcase the breadth of pediatric research conducted throughout the southeastern United States. This year's conference will feature groundbreaking research from Emory University, Children's Healthcare of Atlanta, Georgia Tech, and Morehouse School of Medicine and will bring together basic scientists, clinical researchers, pediatricians, and healthcare providers to advance the integration of innovative research into clinical practice.

Kait Morano Shares Insights on Disaster Resilience With Georgia Lawmakers

Mar 28, 2025 — Atlanta

Kait Morano

Kait Morano, a community resilience expert from Georgia Tech, presented critical insights to the Georgia State House of Representatives study committee on disaster mitigation and resilience. Her testimony highlighted the importance of strong partnerships, evidence-based decision-making, and community-driven planning to better prepare for and withstand the impacts of natural disasters. Morano serves as the resilience planning director for CEAR Hub, which works closely with Georgia’s coastal communities. She is also a research scientist with Georgia Tech’s Institute for People and Technology.

Morano emphasized strategies to address the unique challenges faced by vulnerable coastal communities, underscoring the need for investments in resilience and capacity-building initiatives before a disaster strikes. Her contributions were reflected in the committee's final report, which includes recommendations for creating a dedicated statewide office of resilience, upgrading 911 systems, and bolstering building codes for certain types of facilities. These efforts aim to mitigate the impacts of increasingly frequent and severe disasters on Georgia's communities.

The committee's findings and Morano's testimony underline the vital role of research, collaboration, and proactive planning in building a safer and more resilient Georgia.

The study committee’s final report is available here: https://www.legis.ga.gov/api/document/docs/default-source/house-study-committee-document-library-page/disaster-mitigation-and-resilience/disaster-mitigation-and-resilience-study-committee-final-report.pdf?sfvrsn=c21b5178_2.

New Wearable Brain-Computer Interface

Apr 07, 2025 — Atlanta, GA

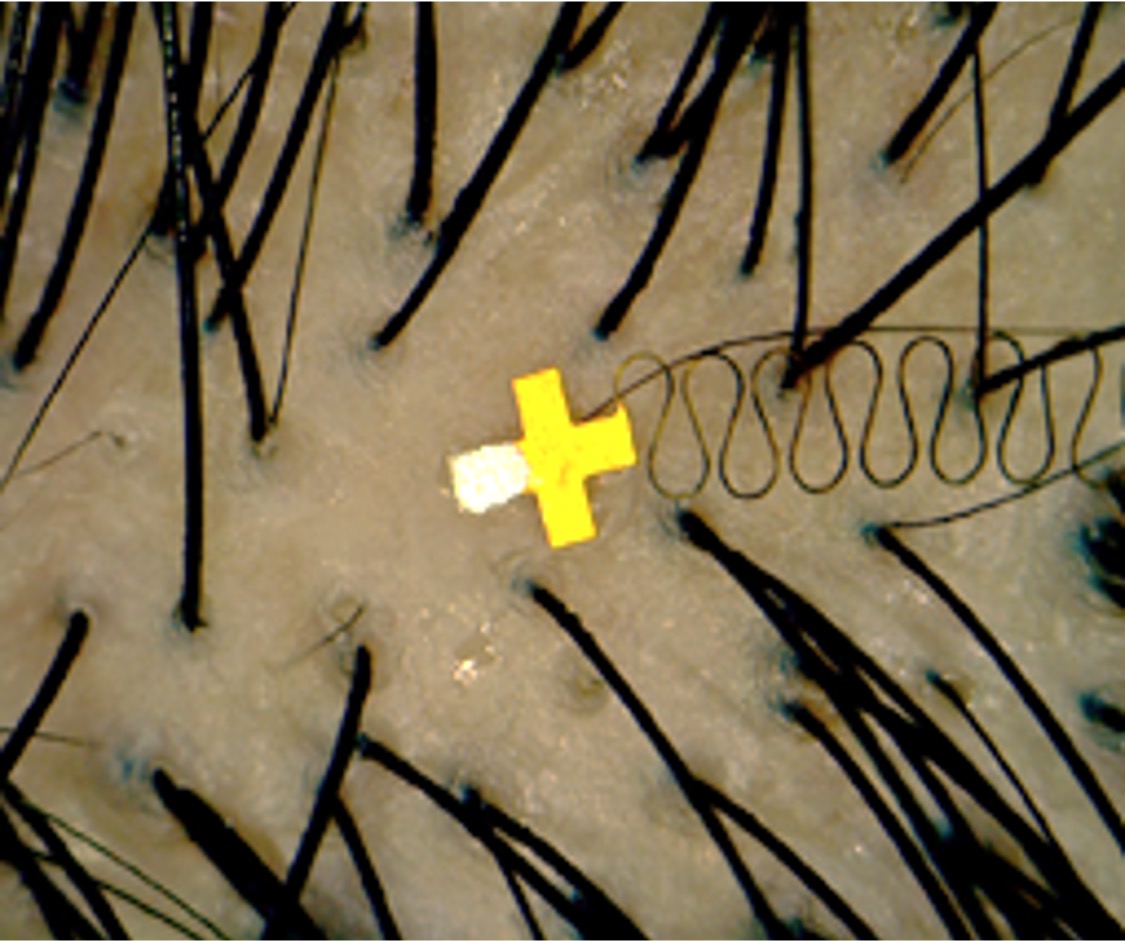

A micro-scale brain sensor on a finger. Credit: W. Hong Yeo.

Micro-brain sensors placed between hair strands overcome traditional brain sensor limitations.

Georgia Tech researchers have developed an almost imperceptible microstructure brain sensor to be inserted into the minuscule spaces between hair follicles and slightly under the skin. The sensor offers high-fidelity signals and makes the continuous use of brain-computer interfaces (BCI) in everyday life possible.

BCIs create a direct communication pathway between the brain's electrical activity and external devices such as electroencephalography devices, computers, robotic limbs, and other brain monitoring devices. Brain signals are commonly captured non-invasively with electrodes mounted on the surface of the human scalp using conductive electrode gel for optimum impedance and data quality. More invasive signal capture methods such as brain implants are possible, but this research seeks to create sensors that are both easily placed and reliably manufactured.

Hong Yeo, the Harris Saunders Jr. Professor in the George W. Woodruff School of Mechanical Engineering, combined the latest microneedle technology with his deep expertise in wearable sensor technology to create a painless, wireless microneedle BCI sensor that fits between hair follicles. The design may allow stable brain signal detection over long periods and easy insertion. The skin placement and extremely small size of this new wireless brain interface could offer a variety of benefits over traditional gel or dry electrodes.

“I started this research because my main goal is to develop new sensor technology to support healthcare and I had previous experience with brain-computer interfaces and flexible scalp electronics,” said Yeo, who is also a faculty member in Georgia Tech’s Institute for People and Technology. “I knew we needed better BCI sensor technology and discovered that if we can slightly penetrate the skin and avoid hair by miniaturizing the sensor, we can dramatically increase the signal quality by getting closer to the source of the signals and reduce unwanted noise.”

Today’s BCI systems consist of bulky electronics and rigid sensors that prevent the interfaces from being useful while the user is in motion during regular activities. Yeo and colleagues constructed a micro-scale sensor for neural signal capture that can be easily worn during daily activities, unlocking new potential for BCI devices. His technology uses conductive polymer microneedles to capture electrical signals and conveys those signals along flexible polyimide/copper wires — all of which are packaged in a space of less than 1 millimeter.

A study of six people using the device to control an augmented reality (AR) video call found that high-fidelity neural signal capture persisted for up to 12 hours with very low electrical resistance at the contact between skin and sensor. Participants could stand, walk, and run for most of the daytime hours while the brain-computer interface successfully recorded and classified neural signals indicating which visual stimulus the user focused on with 96.4% accuracy. During the testing, participants could look up phone contacts and initiate and accept AR video calls hands-free as this new micro-sized brain sensor was picking up visual stimuli — all the while giving the user complete freedom of movement.

According to Yeo, the results suggest that this wearable BCI system may allow for practical and continuous interface activity, potentially leading to everyday use of machine-human integrative technology.

“I firmly believe in the power of collaboration, as many of today’s challenges are too complex for any one individual to solve,” said Yeo. “Therefore, I would like to express my gratitude to all the researchers in my group and the amazing collaborators who made this work possible. I will continue collaborating with the team to enhance BCI technology for rehabilitation and prosthetics.”

Note: Hodam Kim (postdoctoral research fellow), Ju Hyeon Kim (visiting Ph.D. student from Inha University – South Korea), and Yoon Jae Lee (Ph.D. student) also played a major role in developing this technology.

Funding: National Science Foundation NRT (Research Traineeship program in the Sustainable Development of Smart Medical Devices), WISH Center (Institute for Matter and Systems), and partial research support from several South Korean programs and grants.

PNAS article publication (April 7, 2025, Vol. 122, No. 15): https://www.pnas.org/doi/10.1073/pnas.2419304122

A micro-scale brain sensor placed between hair follicles. Credit: W. Hong Yeo.

Walter Rich, Research Communications

Dissertation Lightning Talks

Six School of Interactive Computing PhD students will present 2025 Dissertation Lightning Talks. Presenters will discuss what their dissertation is about, their methodology, what they are doing now, and what they have learned so far. The event will include talks by PhD candidates Adam Coscia, Qiao Zhang, Sichen Jin, Yao Dou, Jonathan Zheng, and Jin Yu.