Youth Advocacy for Resilience to Disasters: Empowering Youth to Be Advocates for Change

Speaker: Allen Hyde, Assistant Professor, School of History & Sociology in the Ivan Allen College of Liberal Arts, Georgia Tech

David Sherrill to Serve as Interim Director of the Institute for Data Engineering and Science

Jan 06, 2025 —

Effective January 1st, David Sherrill will serve as interim executive director of the Georgia Tech Institute for Data Engineering and Science (IDEaS). Sherrill is a Regents' Professor in the School of Chemistry and Biochemistry with a joint appointment in the College of Computing. Sherrill has served as associate director for IDEaS since its founding in 2016.

"David Sherrill's leadership role in IDEaS as associate director, together with his interdisciplinary background in chemistry and computer science, makes him the right person to support this transition as interim executive director," said Julia Kubanek, professor and vice president for interdisciplinary research at Georgia Tech.

Sherrill succeeds Srinivas Aluru, who will be taking a new position as Senior Associate Dean in the College of Computing. Aluru, a Regents' Professor in the School of Computational Science and Engineering, co-founded IDEaS and served as its co-executive director (2016-2019) and then as executive director (2019-present), spanning eight and a half years. Under his leadership, IDEaS grew to more than 200 affiliate faculty spanning all colleges, encompassing multiple state, federal, and industry-funded centers. Notable among these are the South Big Data Hub, which catalyzes the Southern data science community to collectively accelerate scientific discovery and innovation, spur economic development in the region, and broaden participation and diversity in data science, and the CloudHub, a Microsoft-funded center that provides research funding and cloud resources for innovative applications in generative artificial intelligence. More recently, Aluru established the Center for Artificial Intelligence in Science and Engineering (ARTISAN) and expanded the Institute’s research staff to provide needed cyberinfrastructure, software resources, and expertise to support faculty projects with large data sets and AI-driven discovery.

"I've had the pleasure of serving as Associate Director of IDEaS since it was founded by Srinivas Aluru and Dana Randall, and I'm excited to step into this interim role," said Sherrill. "IDEaS has an important mission to serve the many faculty doing interdisciplinary research involving data science and high performance computing."

Sherrill’s research group focuses on the development of ab initio electronic structure theory and its application to problems of broad chemical interest, including the influence of non-covalent interactions in drug binding, biomolecular structure, organic crystals, and organocatalytic transition states. The group seeks to apply the most accurate quantum models possible for a given problem and specializes in generating high-quality datasets for testing new methods or machine-learning purposes.

Sherrill earned a B.S. in chemistry from MIT in 1992 and a Ph.D. in chemistry from the University of Georgia in 1996. From 1996 to 1999, Sherrill was an NSF Postdoctoral Fellow, working under M. Head-Gordon, at the University of California, Berkeley.

Sherrill is a Fellow of the American Association for the Advancement of Science (AAAS), the American Chemical Society, and the American Physical Society, and he has been Associate Editor of the Journal of Chemical Physics since 2009. Sherrill has received a Camille and Henry Dreyfus New Faculty Award, the International Journal of Quantum Chemistry Young Investigator Award, an NSF CAREER Award, and Georgia Tech's W. Howard Ector Outstanding Teacher Award. In 2023, he received the Herty Medal from the Georgia Section of the American Chemical Society, and in 2024, he was elected to the International Academy of Quantum Molecular Science.

--Christa M. Ernst

Christa M. Ernst [christa.ernst@research.gatech.edu],

Research Communications Program Manager,

Topic Expertise: Robotics | Data Sciences | Semiconductor Design & Fab

Gregory Sawicki to Serve as Interim Director of the Institute for Robotics and Intelligent Machines

Jan 06, 2025 —

Effective January 1st, Gregory Sawicki will serve as interim executive director of the Georgia Tech Institute for Robotics and Intelligent Machines (IRIM). Sawicki is a professor and the Joseph Anderer Faculty Fellow in the George W. Woodruff School of Mechanical Engineering with a joint appointment in the School of Biological Sciences.

“Professor Greg Sawicki will make a great interim executive director of IRIM. He brings experience with robotics and collaborative research to this role,” said Julia Kubanek, professor and vice president for interdisciplinary research at Georgia Tech. “He'll be a strong partner to faculty, students, and the EVPR team as we explore the future of IRIM and robotics over the next several months."

Sawicki succeeds Seth Hutchinson, who will be taking a new position at Northeastern University in Boston. Hutchinson, professor and KUKA Chair for Robotics in Georgia Tech’s College of Computing, has served as executive director of IRIM for five years. During Hutchinson’s tenure as executive director, IRIM expanded its industry outreach activities, developed more consistent communications, and grew its faculty pool at Georgia Tech to include a diverse cohort from across the Colleges of Engineering and Computing and the Georgia Tech Research Institute.

"I am extremely excited to step into this leadership role for IRIM, maintain our research excellence in the foundational areas of robotics, and proactively leverage opportunities to grow across campus and beyond in novel, creative interdisciplinary directions,” said Sawicki. “This will involve new initiatives to incentivize connections with GTRI and other IRI's on campus, to build new industry partnerships, and continue to strengthen the M.S./Ph.D. program in Robotics by engaging with Schools beyond those with a traditional footprint in robotics education and research.”

Sawicki directs the Human Physiology of Wearable Robotics (PoWeR) Lab where he and his group seek to discover physiological principles underpinning locomotion performance and apply them to develop lower-limb robotic devices capable of improving both healthy and impaired human locomotion. By focusing on the human side of the human-machine interface, his team has begun to create a roadmap for the design of lower-limb robotic exoskeletons that are truly symbiotic – that is, wearable devices that work seamlessly in concert with the underlying physiological systems to facilitate the emergence of augmented human locomotion performance.

Sawicki earned a B.S. in mechanical and aerospace engineering from Cornell University in 1999, an M.S. in mechanical and aeronautical engineering from the University of California - Davis in 2001, and a Ph.D. in neuromechanics at the University of Michigan-Ann Arbor in 2007. Sawicki completed his postdoctoral studies in integrative biology at Brown University in 2009.

Sawicki has been recognized for his interdisciplinary research and teaching, recently receiving a $2.6 million Research Project Grant from the National Institutes of Health (NIH) to study optimization and artificial intelligence to personalize exoskeleton assistance for individuals with symptoms resulting from stroke.* Sawicki was also selected as a 2021 George W. Woodruff School Academic Leadership Fellow and received the 2022 College of Sciences Student Recognition of Excellence in Teaching and the 2023 American Society of Biomechanics Founders’ Award for excellence in research and mentoring. Sawicki has also been featured as an expert voice on exoskeletons and human neuromechanics in numerous print and television news releases.

--Christa M. Ernst

*Joint Award with Aaron Young, Assistant Professor in the Woodruff School of Mechanical Engineering

How cities are reinventing the public-private partnership − Four lessons from around the globe

Dec 17, 2024 — Atlanta

The Ruta N partnership in Medellín, Colombia, generated thousands of jobs. Jorge Calle/Anadolu Agency via Getty Images

Cities tackle a vast array of responsibilities – from building transit networks to running schools – and sometimes they can use a little help. That’s why local governments have long teamed up with businesses in so-called public-private partnerships. Historically, these arrangements have helped cities fund big infrastructure projects such as bridges and hospitals.

However, our analysis and research show an emerging trend with local governments engaged in private-sector collaborations – what we have come to describe as “community-centered, public-private partnerships,” or CP3s. Unlike traditional public-private partnerships, CP3s aren’t just about financial investments; they leverage relationships and trust. And they’re about more than just building infrastructure; they’re about building resilient and inclusive communities.

As the founding executive director of the Partnership for Inclusive Innovation, based out of the Georgia Institute of Technology, I’m fascinated with CP3s. And while not all CP3s are successful, when done right they offer local governments a powerful tool to navigate the complexities of modern urban life.

Together with international climate finance expert Andrea Fernández of the urban climate leadership group C40, we analyzed community-centered, public-private partnerships across the world and put together eight case studies. Together, they offer valuable insights into how cities can harness the power of CP3s.

READ THE FULL ARTICLE >>

(The Conversation, Dec 16, 2024)

Walter Rich

POSTPONED! Atlanta Veterans Affairs Medical Center Info Session

POSTPONED - Due to expected severe winter weather, the January 21 event will be rescheduled. Stay tuned for the new date!

The Atlanta Veterans Affairs Medical Center (VAMC) Research office will hold an in-person info session for Georgia Tech faculty, research scientists, and postdocs to learn about grant and collaboration opportunities.

Speakers/Panelists

Membrane Biosensor Wins Convergence Innovation Competition in Asia

Dec 11, 2024 — Taipei, Taiwan

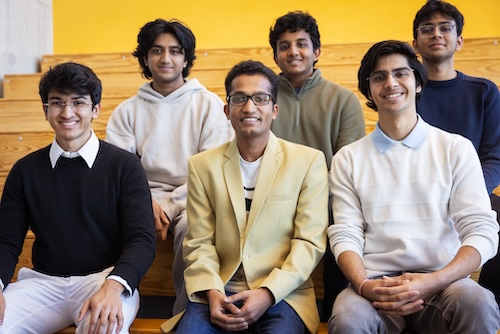

Winning check presented to Team Membrane Biosensor. Pictured left to right: Michael Best, three students from Team Membrane Biosensor, and Shelton Chan.

Team Membrane Biosensor from Yuan Ze University, Taiwan, won the Georgia Tech Institute for People and Technology’s (IPaT) annual Convergence Innovation Competition (CIC), held for the first time in Taipei, Taiwan, on December 7, 2024.

The winning team members were Jia-Wei Chen, Hsu-Hung Kuo, Ngoc-Ngan Dao, and Ngo-My-Uyen Nguyen. The team received $2,000, and each team member was given an ACER laptop and other prizes. The team’s faculty sponsor was Alex Wei, dean of the Industrial Academy at Yuan Ze University.

Their innovative membrane biosensor platform offered a rapid, accurate, and cost-effective solution for disease detection, revolutionizing diagnostic systems and enabling early intervention for improved patient outcomes and pandemic control.

CIC is a competition recognizing student innovation and entrepreneurship responding to today’s global challenges and opportunities. Founded in 2007 in Atlanta, Georgia, CIC is organized by IPaT at the Georgia Institute of Technology.

This year, the competition expanded globally to Asia to forge new partnerships and foster more collaborations with universities across the Asian continent. IPaT’s CIC Asia Faculty Fellows helped cultivate team projects and the students so they could showcase their innovative ideas in this competition.

“The CIC students, the competition finale, and the forum all far exceeded my expectations,” said IPaT executive director Michael Best. “All four of the student finalist projects represented the very best in people-centered technologies responding to global challenges.”

CIC Asia is distinct in how it brings teams from multiple countries together to interact and network. Most innovation competitions are limited to a single university or country.

The three remaining finalist teams each received $1,000 in prize money. The CIC Asia finalist team projects and team members are shown below:

- BurnUp was a project from the students at Fulbright University Vietnam. Their project aimed to create a product that protects motorbikes' engines from water penetrating through the exhaust pipe during heavy rain and small floods.

Team members included: Võ Ngọc Đan Khuê, Trần Thanh Tùng, Trương Công Gia Hiếu, Phan Xuân Quang, Trần Nam Anh. The team’s faculty sponsor was Lan Phan, head of the Center for Entrepreneurship & Innovation at Fulbright University Vietnam.

- GLU@U is a project from a student team at Universiti Putra Malaysia. It is a smart management system for people with abnormal sugar metabolism (i.e. diabetes). It integrates three modules: smart hardware, intelligent data management analysis + decision-making system, and medical passport care management. It uses technologies such as rtCGM, AI, cloud computing, and the Internet of Things to integrate the collection and analysis of relevant user data, the hospital-side SaaS system, and the personal health management app to form a closed loop of digital health monitoring and management inside and outside the hospital. The medical care operation and service system built by GLU@U, as well as the Internet cloud computing platform support system, constitute the full-scene, multi-dimensional operation of GLU@U's "artificial intelligence + chronic disease" intelligent monitoring and digital medical and health management.

Team members included: Jiao Fenglei, Zhang Hua, Jiang Anqi. The team’s faculty sponsor was Iskandar Ishak, associate professor of Computer Science at Universiti Putra Malaysia.

- Guardian Crossing is a project from a student team at Universiti Tenaga Nasional. Guardian Crossing is a safety device that leverages deep learning to enhance indicators aimed at reducing accident risk for pedestrians with limited ability when crossing the road.

Team members included: Nur Zafirah binti Mohd Zaini, Wan Qistina binti Wan Izahan Zameree, Syabil Fikri bin Sabri, Muhammad Danial bin Noor Shamsudin. The team’s faculty sponsor was Nur Laila Ab Ghani, lecturer of informatics at Universiti Tenaga Nasional.

Global Technology Strategy and Workforce Development Forum

The CIC event took place alongside the Global Technology Strategy and Workforce Development Forum, which was also organized by IPaT. The forum featured panel discussions on innovation and entrepreneurship, talent development, artificial intelligence (AI), and sustainable business practices. Close to 200 leaders from industry, academia, civil society, and government across Asia attended the forum and witnessed the CIC students' presentations and award ceremony.

Prominent figures from Taiwan’s industry, government, academia, and research sectors participating in the forum included Liu Cheng, vice president of Tunghai University; Chang Ruey-Shiong, former president of National Taipei University of Business; Cai Qiyan, CIO of Taiwan Mobile; Albert Weng, Chairman and CEO Assistant of Qisda Corporation; Nicole Chan, chairwoman of the Artificial Intelligence Foundation; and Kai Hua, Chief Technology Officer of Microsoft Taiwan.

The event was also co-hosted by the Lee Kuan Yew Technology Development Foundation and the Southeast Asia Impact Alliance, according to Shelton Chan, managing director for international development, Asia region, with the Georgia Institute of Technology.

The forum consisted mainly of three panels: one on AI and sustainability, one on workforce development, and one on innovation and entrepreneurship. Panelists were a diverse group of university leaders, industry leaders, and policy innovators, and included Georgia Tech faculty and alumni.

“CIC Asia and the Global Technology Strategy and Workforce Development Forum event illustrate ways that IPaT continues to grow Georgia Tech’s global influence,” said Best. “The audience was made up of high-level movers and shakers in the Asian technology ecosystem and I think we really impressed them.”

Pictures of CIC Asia and the Forum can be viewed here.

###

Group picture of participating students, faculty and some attendees to CIC Asia 2024 in Taipei, Taiwan.

Walter Rich

Neuro Next Seminar

Margaret Kosal

Associate Professor

Sam Nunn School of International Affairs

Georgia Tech

To participate virtually, CLICK HERE

Speaker Bio

New Dataset Takes Aim at Subjective Misinformation in Earnings Calls and Other Public Hearings

Dec 03, 2024 —

Georgia Tech researchers have created a dataset that trains computer models to understand nuances in human speech during financial earnings calls. The dataset provides a new resource to study how public correspondence affects businesses and markets.

SubjECTive-QA is the first human-curated dataset on question-answer pairs from earnings call transcripts (ECTs). The dataset teaches models to identify subjective features in ECTs, like clarity and cautiousness.

The dataset lays the foundation for a new approach to identifying disinformation and misinformation caused by nuances in speech. While ECT responses can be technically true, unclear or irrelevant information can misinform stakeholders and affect their decision-making.

Tests on White House press briefings showed that the dataset applies to other sectors with frequent question-and-answer encounters, notably politics, journalism, and sports. This increases the odds of effectively informing audiences and improving transparency across public spheres.

The intersecting work between natural language processing and finance earned the paper acceptance to NeurIPS 2024, the 38th Annual Conference on Neural Information Processing Systems. NeurIPS is one of the world’s most prestigious conferences on artificial intelligence (AI) and machine learning (ML) research.

"SubjECTive-QA has the potential to revolutionize nowcasting predictions with enhanced clarity and relevance,” said Agam Shah, the project’s lead researcher.

[MICROSITE: Georgia Tech at NeurIPS 2024]

“Its nuanced analysis of qualities in executive responses, like optimism and cautiousness, deepens our understanding of economic forecasts and financial transparency."

SubjECTive-QA offers a new means to evaluate financial discourse by characterizing language's subjective and multifaceted nature. This improves on traditional datasets that quantify sentiment or verify claims from financial statements.

The dataset consists of 2,747 Q&A pairs taken from 120 ECTs from companies listed on the New York Stock Exchange from 2007 to 2021. The Georgia Tech researchers annotated each response by hand based on six features for a total of 49,446 annotations.

The group evaluated answers on:

- Relevance: the speaker answered the question with appropriate details.

- Clarity: the speaker was transparent in the answer and the message conveyed.

- Optimism: the speaker answered with a positive outlook regarding future outcomes.

- Specificity: the speaker included sufficient and technical details in their answer.

- Cautiousness: the speaker answered using a conservative, risk-averse approach.

- Assertiveness: the speaker answered with certainty about the company’s events and outcomes.
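To make the annotation scheme concrete, here is a minimal sketch of how one annotated Q&A pair might be represented. The class name, field names, and rating values are illustrative assumptions, not the published schema. The arithmetic uses only figures reported in this article: 2,747 pairs times six features gives 16,482 feature labels, and 49,446 annotations divided by that number is exactly three. That would be consistent with three ratings per feature per response, though this is an inference and the article does not state how many annotators were involved.

```python
from dataclasses import dataclass, field

# The six subjective features named in the article.
FEATURES = (
    "relevance", "clarity", "optimism",
    "specificity", "cautiousness", "assertiveness",
)


@dataclass
class AnnotatedPair:
    """Hypothetical record for one Q&A pair; not the dataset's actual schema."""
    question: str
    answer: str
    # Feature name -> list of ratings, one per annotation (values are assumed).
    ratings: dict[str, list[int]] = field(default_factory=dict)

    def annotation_count(self) -> int:
        """Total number of individual ratings attached to this pair."""
        return sum(len(r) for r in self.ratings.values())


# Figures reported in the article.
NUM_PAIRS = 2_747
TOTAL_ANNOTATIONS = 49_446

feature_slots = NUM_PAIRS * len(FEATURES)
annotations_per_slot = TOTAL_ANNOTATIONS / feature_slots

# Placeholder text and made-up ratings, for illustration only.
example = AnnotatedPair(
    question="(placeholder question)",
    answer="(placeholder answer)",
    ratings={name: [1, 1, 1] for name in FEATURES},
)

print(feature_slots, annotations_per_slot, example.annotation_count())
```

With three ratings on each of the six features, a single pair carries 18 annotations, and 2,747 such pairs account for the reported total.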

The Georgia Tech group validated their dataset by training eight computer models to detect and score these six features. The test models comprised three BERT-based pre-trained language models (PLMs) and five popular large language models (LLMs), including Llama and ChatGPT.

All eight models scored the highest on the relevance and clarity features. This is attributed to domain-specific pretraining that enables the models to identify pertinent and understandable material.

The PLMs achieved higher scores on the clarity, optimism, specificity, and cautiousness features. The LLMs scored higher in assertiveness and relevance.

In another experiment to test transferability, a PLM trained with SubjECTive-QA evaluated 65 Q&A pairs from White House press briefings and gaggles. Scores across all six features indicated models trained on the dataset could succeed in other fields outside of finance.

"Building on these promising results, the next step for SubjECTive-QA is to enhance customer service technologies, like chatbots,” said Shah, a Ph.D. candidate studying machine learning.

“We want to make these platforms more responsive and accurate by integrating our analysis techniques from SubjECTive-QA."

SubjECTive-QA is the culmination of two semesters of work through Georgia Tech’s Vertically Integrated Projects (VIP) Program. The VIP Program is an approach to higher education where undergraduate and graduate students work together on long-term project teams led by faculty.

Undergraduate students earn academic credit and receive hands-on experience through VIP projects. The extra help advances ongoing research and gives graduate students mentorship experience.

Computer science major Huzaifa Pardawala and mathematics major Siddhant Sukhani co-led the SubjECTive-QA project with Shah.

Fellow collaborators included Veer Kejriwal, Abhishek Pillai, Rohan Bhasin, Andrew DiBiasio, Tarun Mandapati, and Dhruv Adha. All six researchers are undergraduate students studying computer science.

Sudheer Chava co-advises Shah and is the faculty lead of SubjECTive-QA. Chava is a professor in the Scheller College of Business and director of the M.S. in Quantitative and Computational Finance (QCF) program.

Chava is also an adjunct faculty member in the College of Computing’s School of Computational Science and Engineering (CSE).

"Leading undergraduate students through the VIP Program taught me the powerful impact of balancing freedom with guidance,” Shah said.

“Allowing students to take the helm not only fosters their leadership skills but also enhances my own approach to mentoring, thus creating a mutually enriching educational experience.”

Presenting SubjECTive-QA at NeurIPS 2024 exposes the dataset for further use and refinement. NeurIPS is one of three primary international conferences on high-impact research in AI and ML. The conference occurs Dec. 10-15.

The SubjECTive-QA team is among the 162 Georgia Tech researchers presenting over 80 papers at NeurIPS 2024. The Georgia Tech contingent includes 46 faculty members, Chava among them. These faculty represent Georgia Tech’s Colleges of Business, Computing, Engineering, and Sciences, underscoring the pertinence of AI research across domains.

"Presenting SubjECTive-QA at prestigious venues like NeurIPS propels our research into the spotlight, drawing the attention of key players in finance and tech,” Shah said.

“The feedback we receive from this community of experts validates our approach and opens new avenues for future innovation, setting the stage for transformative applications in industry and academia.”

Bryant Wine, Communications Officer

bryant.wine@cc.gatech.edu

Researchers Say AI Copyright Cases Could Have Negative Impact on Academic Research

Nov 21, 2024 — Atlanta

Deven Desai and Mark Riedl have seen the signs for a while.

Two years since OpenAI introduced ChatGPT, dozens of lawsuits have been filed alleging technology companies have infringed copyright by using published works to train artificial intelligence (AI) models.

Academic AI research efforts could be significantly hindered if courts rule in the plaintiffs' favor.

Desai and Riedl are Georgia Tech researchers raising awareness about how these court rulings could force academic researchers to construct new AI models with limited training data. The two collaborated on a benchmark academic paper that examines the landscape of the ethical issues surrounding AI and copyright in industry and academic spaces.

“There are scenarios where courts may overreact to having a book corpus on your computer, and you didn’t pay for it,” Riedl said. “If you trained a model for an academic paper, as my students often do, that’s not a problem right now. The courts could deem training is not fair use. That would have huge implications for academia.

“We want academics to be free to do their research without fear of repercussions in the marketplace because they’re not competing in the marketplace,” Riedl said.

Desai is the Sue and John Stanton Professor of Business Law and Ethics at the Scheller College of Business. He researches how business interests and new technology shape privacy, intellectual property, and competition law. Riedl is a professor at the College of Computing’s School of Interactive Computing, researching human-centered AI, generative AI, explainable AI, and gaming AI.

Their paper, Between Copyright and Computer Science: The Law and Ethics of Generative AI, was published in the Northwestern Journal of Technology and Intellectual Property on Monday.

Desai and Riedl say they want to offer solutions that balance the interests of various stakeholders. But that requires compromise from all sides.

Researchers should accept they may have to pay for the data they use to train AI models. Content creators, on the other hand, should receive compensation, but they may need to accept less money to ensure data remains affordable for academic researchers to acquire.

Who Benefits?

The doctrine of fair use is at the center of every copyright debate. According to the U.S. Copyright Office, fair use permits the unlicensed use of copyright-protected works in certain circumstances, such as distributing information for the public good, including teaching and research.

Fair use is often challenged when one or more parties profit from published works without compensating the authors.

Any original published content, including a personal website on the internet, is protected by copyright. However, copyrighted material is republished on websites or posted on social media innumerable times every day without the consent of the original authors.

In most cases, it’s unlikely copyright violators gained financially from their infringement.

But Desai said business-to-business cases are different. The New York Times is one of many daily newspapers and media companies that have sued OpenAI for using its content as training data. Microsoft is also a defendant in The New York Times’ suit because it invested billions of dollars into OpenAI’s development of AI tools like ChatGPT.

“You can take a copyrighted photo and put it in your Twitter post or whatever you want,” Desai said. “That’s probably annoying to the owner. Economically, they probably wanted to be paid. But that’s not business to business. What’s happening with OpenAI and The New York Times is business to business. That’s big money.”

OpenAI started as a nonprofit dedicated to the safe development of artificial general intelligence (AGI) — AI that, in theory, can rival human thinking and possess autonomy.

These AI models would require massive amounts of data and expensive supercomputers to process that data. OpenAI could not raise enough money to afford such resources, so it created a for-profit arm controlled by its parent nonprofit.

Desai, Riedl, and many others argue that OpenAI ceased its research mission for the public good and began developing consumer products.

“If you’re doing basic research that you’re not releasing to the world, it doesn’t matter if every so often it plagiarizes The New York Times,” Riedl said. “No one is economically benefitting from that. When they became a for-profit and produced a product, now they were making money from plagiarized text.”

OpenAI’s for-profit arm is valued at $80 billion, but content creators have not received a dime, even though the company has scraped massive amounts of copyrighted material as training data.

The New York Times has posted warnings on its sites that its content cannot be used to train AI models. Many other websites offer a robots.txt file that contains instructions for bots about which pages can and cannot be accessed.

Neither of these measures is legally binding, and both are often ignored.
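As an illustration of how such a file works, the sketch below uses Python's standard-library `urllib.robotparser` to check whether a crawler may fetch a page. The rules, the bot name `ExampleAIBot`, and the `example.com` URLs are hypothetical and are not taken from any real site. Note that the check runs inside the crawler itself, which is why compliance is voluntary.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents; real sites publish their own rules.
ROBOTS_TXT = """\
User-agent: ExampleAIBot
Disallow: /

User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def may_fetch(user_agent: str, url: str) -> bool:
    """Return True if the parsed rules allow this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)


# The named bot is barred from the whole site.
print(may_fetch("ExampleAIBot", "https://example.com/articles/1"))
# Any other bot falls back to the wildcard rules.
print(may_fetch("OtherBot", "https://example.com/articles/1"))
print(may_fetch("OtherBot", "https://example.com/private/page"))
```

A well-behaved crawler would call a check like `may_fetch` before each request and skip disallowed pages. Nothing in the file itself prevents a crawler from skipping the check.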

Solutions

Desai and Riedl offer a few options for companies to show good faith in rectifying the situation.

- Spend the money. Desai says OpenAI and Microsoft could have afforded to pay for their training data and avoided the hassle of legal consequences.

“If you do the math on the costs to buy the books and copy them, they could have paid for them,” he said. “It would’ve been a multi-million dollar investment, but they’re a multi-billion dollar company.”

- Be selective. Models can be trained on randomly selected texts from published works, allowing the model to understand the writing style without plagiarizing.

“I don’t need the entire text of War and Peace,” Desai said. “To capture the way authors express themselves, I might only need a hundred pages. I’ve also reduced the chance that my model will cough up entire texts.”

- Leverage libraries. The authors agree libraries could serve as an ideal middle ground as a place to store published works and compensate authors for access to those works, though the amount may be less than desired.

“Most of the objections you could raise are taken care of,” Desai said. “They are legitimate access copies that are secure. You get access to only as much as you need. Libraries at universities have already become schools of information.”

Desai and Riedl hope the legal action taken by publications like The New York Times will send a message to companies that develop AI tools to pump the brakes. If they don’t, researchers uninterested in profit could pay the steepest price.

The authors say it’s not a new problem but is reaching a boiling point.

“In the history of copyright, there are ways that society has dealt with the problem of compensating creators and technology that copies or reduces your ability to extract money from your creation,” Desai said. “We wanted to point out there’s a way to get there.”

Nathan Deen

Communications Officer

School of Interactive Computing