Accelerating Discovery With AI

May 18, 2026 —

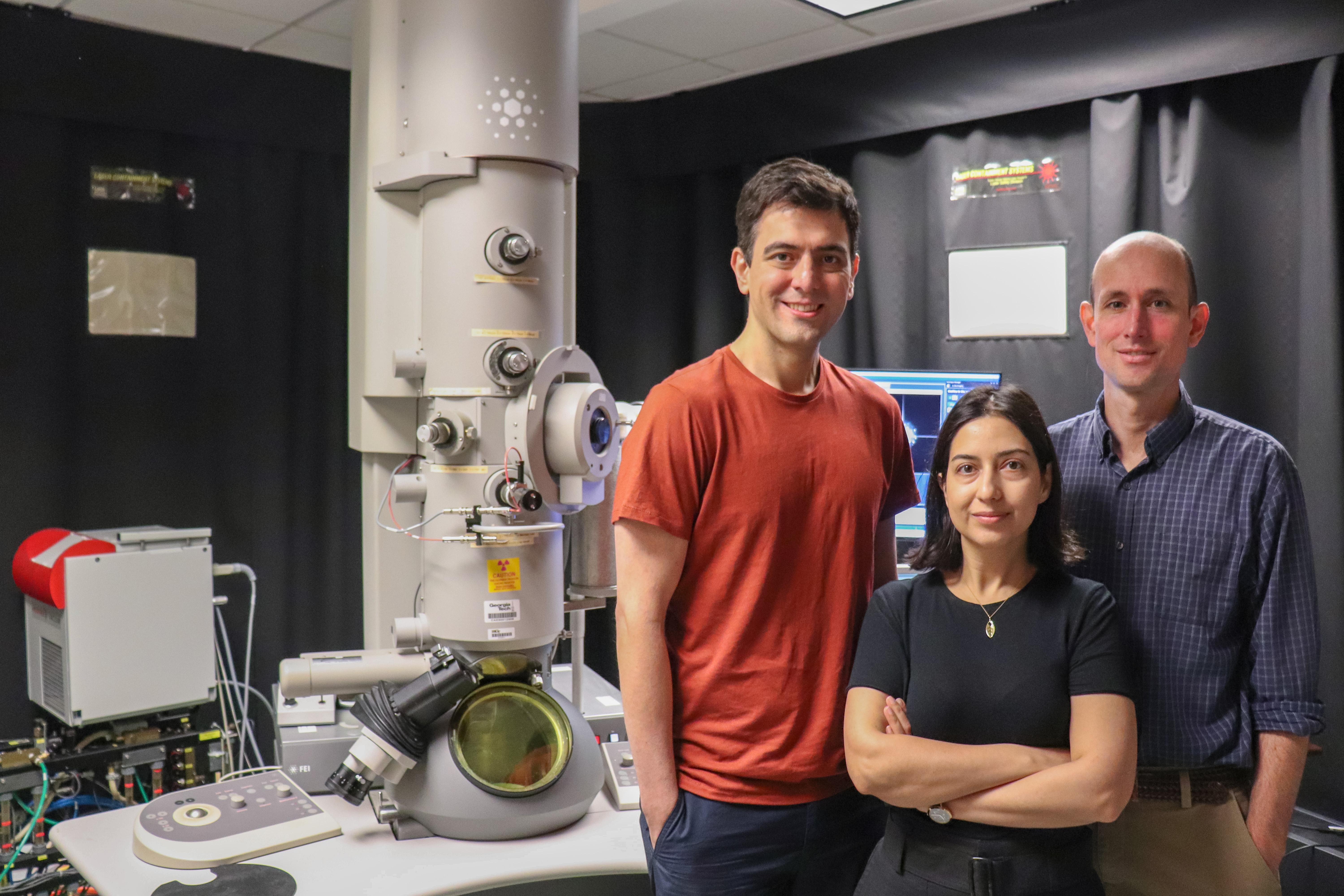

A photo of Vida Jamali, assistant professor in the School of Chemical and Biomolecular Engineering; Amirali Aghazadeh, assistant professor in the School of Electrical and Computer Engineering; and Josh Kacher, associate professor in the School of Materials Science and Engineering, standing in front of a TEM at Georgia Tech.

Scientific discovery is often portrayed as the result of long hours alone in a lab, but true science is inherently collaborative. The most robust experimental processes are developed through partnerships across multiple areas of research. The need for specialized, multidisciplinary teams slows experiment design, execution, data analysis, and process updates, delaying technological validation and deployment. But if the increasingly automated tools scientists already use in the lab could contribute to this team process of experimental design, the timeline for these goals could be greatly accelerated.

This concept of “lab tool as lab assistant” is the premise of a recent paper in npj Computational Materials titled “Thinking Microscopes: Agentic AI and the Future of Electron Microscopy,” by Vida Jamali, assistant professor in the School of Chemical and Biomolecular Engineering; Amirali Aghazadeh, assistant professor in the School of Electrical and Computer Engineering; and Josh Kacher, associate professor in the School of Materials Science and Engineering.

In the paper, the team introduces the concept of “thinking electron microscopes,” in which agentic AI systems are directly integrated with the instrument. This allows microscopes to move beyond their conventional role as characterization tools and toward functioning as co-scientists for human users.

Drawing on advances in specialized large language models, or LLMs, that demonstrate their ability to collaborate, reason over data, and integrate prior knowledge, the team envisions specialized LLM-based agents assigned to specific roles and areas of knowledge expertise. By explicitly incorporating domain knowledge into specialized agents and distributing information across multiple agents with focused expertise, the approach enables parallel evaluation of competing hypotheses, clearer separation of roles — such as planning, simulation, and critique — and more transparent and robust reasoning.

Within the experimental pipeline, these agents can analyze materials’ properties, physical data, chemical processes, and other relevant parameters. They could also collaborate with an agent that specializes in experimental design, refining iterative closed-loop experimentation and supporting real-time scientific discovery.

Although the research focuses on AI collaboration, the team notes that human researchers must retain accountability for the accuracy and integrity of both the experimental process and the results reported. This oversight begins with advocating for greater open access to research materials in all formats, building community-driven data repositories, and adopting standardization in how experimental parameters and metadata are reported. Equally important, researchers should be willing to report data from failed experiments as well as successful outcomes. Finally, organizations should work together to standardize secure APIs that enable shared, remote access to infrastructure across distances.

We see this as a step toward scientific instruments that do more than acquire data; systems that can reason over experiments, adapt measurements, and participate in the scientific discovery process alongside researchers. - Vida Jamali, assistant professor in the School of Chemical and Biomolecular Engineering

The team is already developing these systems by connecting cloud-based, agentic infrastructures to microscopes at the Institute for Matter and Systems at Georgia Tech. With the addition of agentic AI, the goal is to accelerate discovery and engineering of new nanoscale materials for energy and quantum applications, as well as advance capabilities in cryo-electron microscopy and structural biology. These tools can optimize data collection, link real-time microscope observations with structural models of proteins, and dynamically adjust and prioritize experiments. The team sees this work as the first step toward the next generation of “thinking” electron microscopes, as well as an advancement in scientific discovery across domains.

- Christa M. Ernst

This research is supported by the Institute for Data Engineering and Science and the Institute for Matter and Systems.

Original Publication

Jamali, V., Aghazadeh, A. & Kacher, J. Thinking microscopes: agentic AI and the future of electron microscopy. npj Computational Materials 12, 149 (2026). https://doi.org/10.1038/s41524-026-02077-y

IDEaS Over POPS!

Come celebrate the last day of classes with the Institute for Data Engineering and Science community. Students are especially welcome, and we are expecting students from Data Science at GT, the Supercomputing club, and the Data Safety Initiative. Come find out how our students found the spring semester and hear their views on data science, ML, and AI in education.

IDEaS + AI-ALOE Distinguished Lecture: AI and Lifelong Learning: Building the 60-Year Curriculum for the Fourth Industrial Revolution

Speaker: Sae Schatz, Ph.D. | Founder and CEO, the Knowledge Forge LLC

IDEaS Upskilling Workshop on AI for Research

Join us for an afternoon of demonstrations on how AI can be used in research. Topics will include using AI for visualization and creating high-quality graphics, as an aid to learn new software, and as an assistant in writing code. The workshop will also discuss how to integrate AI with high-performance computing platforms like PACE, and introduce Georgia Tech resources and policies. The workshop is primarily aimed at graduate students and postdocs, but other members of the Georgia Tech community are welcome as well.

Amazon Bedrock AgentCore Workshop: From Prototype to Production

This comprehensive hands-on workshop will guide participants through building a Mortgage Assistant Agent that demonstrates the full spectrum of AgentCore capabilities - from basic conversational AI to enterprise-grade deployment with memory, security, and observability.

Researchers Create First AI for Generative Polymer Design

Mar 24, 2026 —

Researchers have created a chemical language AI model to generate new polymer structures based on the properties those polymers need to exhibit. Led by Rampi Ramprasad, standing, the team included postdoctoral scholar Wei Xiong, Ph.D. student Anagha Savit, and research scientist Harikrishna Sahu, who are seated left to right. (Photo: Candler Hobbs)

The words on this page mean something because they are assembled in a particular order and follow the complex rules of grammar and syntax. Creating new chemical polymers follows a similar kind of structure, with rules about what elements and groups of atoms go together and how to assemble them to make sense.

Thinking about polymers in that way has led Georgia Tech materials scientists to create new generative artificial intelligence tools that are like Claude or ChatGPT for new materials.

These are the first foundational models for generative polymer design that have also been validated through physical experiments: users specify the properties they need in a polymer and the model will suggest a chemical structure.

Led by Regents’ Entrepreneur Rampi Ramprasad, the researchers described their latest model this month in the Nature journal npj Artificial Intelligence — including a test material they created and validated in the lab to prove the models work.

Joshua Stewart

College of Engineering

David Sherrill Named Executive Director of the Institute for Data Engineering and Science

Feb 26, 2026 —

Georgia Tech has appointed David Sherrill as executive director of the Institute for Data Engineering and Science (IDEaS), effective March 1. Sherrill is a Regents' Professor in the School of Chemistry and Biochemistry with a joint appointment in the School of Computational Science & Engineering. Sherrill has served as associate director for IDEaS since its founding in 2016 and as interim director since January 1, 2025.

“I’m thrilled to see Professor Sherrill tackle this role for the coming 5 years. He understands the rapidly evolving opportunities to apply AI and data science approaches to the diversity of research conducted by Georgia Tech faculty and students, and has a strong agenda to help our researchers make the most of this explosive change in the research landscape,” said Julia Kubanek, V.P. of Interdisciplinary Research. “He also has deep experience with team building and management which will position IDEaS favorably.”

As executive director, Sherrill will guide IDEaS’ current initiatives, which include the Microsoft CloudHub program that supports innovative applications in Generative Artificial Intelligence. He will also provide oversight and support for the joint College of Computing / IDEaS Center for Artificial Intelligence in Science and Engineering (ARTISAN), which provides Georgia Tech faculty and research engineers expert support staff, needed cyberinfrastructure, software resources, and advice to assist faculty with projects using large data sets or using AI and machine learning to drive discovery.

Sherrill will also lead the launch of a new strategic vision, emphasizing the Georgia Tech research community’s expertise in the development of AI and ML techniques and their application to problems in science and engineering, high performance computing, and academic software. Sherrill will focus on internal and external partnerships at IDEaS, creating new collaborative efforts in areas such as economics, policy, and the arts and humanities. He will also work to strengthen current connections across Georgia Tech’s Colleges, Interdisciplinary Research Institutes (IRIs), and the Georgia Tech Research Institute (GTRI).

“It’s a great honor to be named the next executive director of IDEaS,” said Sherrill. “Georgia Tech has world-class faculty and students, and an unparalleled spirit of collaboration. By bringing together faculty from across campus and working together with some of the amazing student groups, we can leverage the power of AI to accelerate our research and maximize our impact. IDEaS will continue to run upskilling workshops to help our campus keep pace with the rapid changes in AI.”

Sherrill is an active promoter of education in computational quantum chemistry, as well as a strong voice for the benefits of open-source software for research acceleration. He was named Outreach Volunteer of the Year by the Georgia Section of the American Chemical Society in 2017, and he is the lead principal investigator of the Psi open-source quantum chemistry program.

Sherrill earned a B.S. in chemistry from MIT in 1992 and a Ph.D. in chemistry from the University of Georgia in 1996. From 1996 to 1999, Sherrill was an NSF Postdoctoral Fellow at the University of California, Berkeley.

Sherrill is a Fellow of the American Association for the Advancement of Science (AAAS), the American Chemical Society, and the American Physical Society, and he has been Associate Editor of the Journal of Chemical Physics since 2009. Sherrill has received a Camille and Henry Dreyfus New Faculty Award, the International Journal of Quantum Chemistry Young Investigator Award, an NSF CAREER Award, and Georgia Tech's W. Howard Ector Outstanding Teacher Award. In 2023, he received the Herty Medal from the Georgia Section of the American Chemical Society, and in 2024, he was elected to the International Academy of Quantum Molecular Science.

- Christa M. Ernst

IDEaS Over Coffee

Discussion Topic TBA

IDEaS-affiliated students and staff are also welcome.

If you do not have access to Coda, near the beginning of the event we will have a staff member or student waiting by the reception desk near the elevators to escort people up.

For questions, please contact Ashley.edwards@gatech.edu

IDEaS Over Coffee: Tips for AI Tools

AI is charging forward with unprecedented speed and impact.

Please join us on Monday, 02/23, at 2 p.m. for open discussions about the direction and development of AI.

What AI tools have you tried for writing, finding literature citations, making presentations, coding, or automating research tasks? Come share your experiences and learn from others over coffee with the IDEaS community.